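Preface

This article skips the theory and focuses on code. Many blog posts explain the theory, but their code usually stops at undistortion, which is as far as the official OpenCV sample goes.

The official sample uses a black-and-white chessboard to solve for the camera intrinsics, extrinsics, and distortion coefficients, then undistorts the image. Practical use needs more than that. This article covers:

(1) Solving for extrinsics in the actual application scene. The extrinsics from chessboard calibration are relative to the chessboard's own world coordinate system and cannot be used directly;

(2) Solving for intrinsics, extrinsics, and distortion coefficients in the real scene from manually marked points instead of a chessboard;

(3) Converting world coordinates to pixel coordinates, for both the original (distorted) image and the undistorted image;

(4) Converting pixel coordinates in the undistorted image to world coordinates;

(5) The same functionality for fisheye cameras (updated 2022.12.9);

(6) Code uploaded to GitHub (updated 2023.5.4).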

Environment:

Python 3.7

OpenCV 4.5.3.56

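1. Chessboard Camera Calibration

This code is lightly modified from the official sample. Because of practical constraints, the chessboard images in chess_path were shot locally with a camera of the same model, while the real images in real_path were shot by the camera installed on site.

1.1 Core Code Walkthrough

1.1.1 Solving for Intrinsics, Distortion Coefficients, and Extrinsics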

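    retval, cameraMatrix, distCoeffs, rvecs, tvecs = cv2.calibrateCamera(objectPoints, imagePoints, imageSize, cameraMatrix, distCoeffs[, rvecs[, tvecs[, flags[, criteria]]]])
    # Actual usage
    rms, camera_matrix, dist_coefs, _rvecs, _tvecs = cv2.calibrateCamera(obj_points, img_points, (w, h), None, None)

Inputs:

- obj_points: chessboard world coordinates. The world coordinate system is defined on the chessboard plane itself, so every z coordinate is 0 and the xy coordinates follow the grid: [0,0,0], [1,0,0], …, [0,1,0], [1,1,0], …
- img_points: chessboard pixel coordinates, found mainly with cv2.findChessboardCorners
- (w, h): image size

Outputs:

- rms: RMS reprojection error
- camera_matrix: intrinsic matrix, [3,3]
- dist_coefs: distortion coefficients, [1, 5]
- _rvecs: rotation vectors, a list of length n where each item is a [3,1] vector and n is the number of valid chessboard images
- _tvecs: translation vectors, same layout as _rvecs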

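Additional notes:

(1) cv2.findChessboardCorners(image, patternSize) needs the number of inner corners as patternSize. For example, a chessboard with $10 \times 7$ squares has $9 \times 6$ inner corners.

(2) The calibration result depends heavily on the input chessboard images: their order, how the chessboard is distributed across the frame, the number of images, and so on. The official site also says it cannot give precise recommendations on these points. As a rule of thumb, use more than 10 images and place the chessboard throughout the frame, taking care to cover the edges and corners.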
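To make the obj_points layout described above concrete, here is a minimal sketch that builds the grid for a 9×6 inner-corner pattern with NumPy only. The helper name `make_object_points` is made up for this example; the indexing trick is the same one used in the full listing in section 1.2.

```python
import numpy as np


def make_object_points(pattern_size, square_size=1.0):
    """Return (cols * rows, 3) float32 chessboard corners on the z = 0 plane.

    pattern_size is (cols, rows) counted in inner corners, as passed to
    cv2.findChessboardCorners. Hypothetical helper, for illustration only.
    """
    cols, rows = pattern_size
    pts = np.zeros((cols * rows, 3), np.float32)
    # np.indices gives the x and y index grids; .T makes x vary fastest
    pts[:, :2] = np.indices((cols, rows)).T.reshape(-1, 2)
    return pts * square_size


obj = make_object_points((9, 6))
print(obj.shape)  # number of corners and coordinates per corner
print(obj[:3])    # first corners along the x axis
print(obj[9])     # first corner of the second row
```

Setting square_size to the real square edge length (for example in metres) only changes the scale of the resulting translation vectors.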

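1.1.2 Undistortion

Method 1:

    newcameramtx, roi = cv2.getOptimalNewCameraMatrix(camera_matrix, dist_coefs, (w, h), 1, (w, h))
    dst = cv2.undistort(self.camera_img, camera_matrix, dist_coefs, None, newcameramtx)
    x, y, w, h = roi
    dst_crop = dst[y:y + h, x:x + w]

(1) newcameramtx, roi = cv2.getOptimalNewCameraMatrix(cameraMatrix, distCoeffs, imageSize, alpha[, newImgSize[, centerPrincipalPoint]])

Inputs:

- alpha: the main parameter worth discussing. Its range is $[0, 1]$. With 0, the undistorted image keeps only the valid region (black borders and warped parts are cropped away); with 1, every pixel of the original image is kept (no cropping).

Outputs:

- newcameramtx: new intrinsic matrix for the undistorted image
- roi: the region that the undistorted image still needs to be cropped to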

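(2) dst = cv2.undistort(src, cameraMatrix, distCoeffs[, dst[, newCameraMatrix]])

Inputs:

- newCameraMatrix: controls which part of the original image the undistorted image covers, and is also the intrinsic matrix of dst. Defaults to cameraMatrix when None.

Outputs:

- dst: the undistorted image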

补充说明:

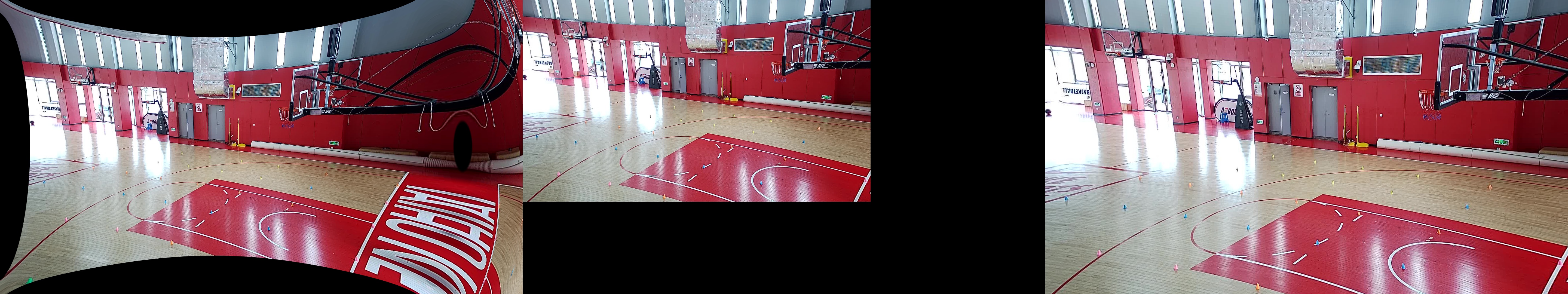

下图可以直观的感受 alpha 带来的差异;左图:alpha=1 未裁剪图像,中图:alpha=1 裁剪后图像,右图:alpha=0 图像(此时已不需要裁剪,roi=(0, 0, 1919, 1079))

Method 2:

Undistorting video frame by frame with Method 1 turned out to be slow. The official documentation notes that cv2.undistort() in Method 1 is really a combination of cv2.initUndistortRectifyMap() and cv2.remap(). Since the map is fixed for a given camera, computing it once in advance saves that step on every frame and improves efficiency considerably.

    newcameramtx, roi = cv2.getOptimalNewCameraMatrix(camera_matrix, dist_coefs, (w, h), 1, (w, h))
    map1, map2 = cv2.initUndistortRectifyMap(camera_matrix, dist_coefs, None, newcameramtx, (w, h), cv2.CV_16SC2)
    dst = cv2.remap(self.camera_img, map1, map2, cv2.INTER_LINEAR)

(1) map1, map2 = cv2.initUndistortRectifyMap(cameraMatrix, distCoeffs, R, newCameraMatrix, size, m1type[, map1[, map2]])

Inputs:

- R: optional rectification transform; when None is passed, cv2.initUndistortRectifyMap() assumes the identity matrix
- m1type: CV_32FC1, CV_32FC2, or CV_16SC2

(2) dst = cv2.remap(src, map1, map2, interpolation[, dst[, borderMode[, borderValue]]])

Additional notes:

The map layout that goes with each m1type is not covered here, but using CV_16SC2 makes remap faster.

1.2 Full Code

    import argparse
    import os
    from glob import glob

    import numpy as np
    import cv2


    def check_path(path):
        if not os.path.exists(path):
            os.mkdir(path)


    def splitfn(fn):
        path, fn = os.path.split(fn)
        name, ext = os.path.splitext(fn)
        return path, name, ext


    def calibrate(args):
        check_path(args.out_path)
        out_chess_path = os.path.join(args.out_path, "output")
        check_path(out_chess_path)
        # argparse returns a list; OpenCV expects a (cols, rows) tuple
        pattern_size = tuple(args.pattern_size)

        # Chessboard image files
        # img_names = sorted(os.listdir(args.chess_path))
        file_names = glob(os.path.join(args.chess_path, "test_??.jpg"))

        # Generate the chessboard world coordinates
        pattern_points = np.zeros((np.prod(pattern_size), 3), np.float32)
        pattern_points[:, :2] = np.indices(pattern_size).T.reshape(-1, 2)
        pattern_points *= args.square_size

        obj_points = []
        img_points = []
        h, w = cv2.imread(file_names[0], 0).shape[:2]

        # Detect chessboard corners (run in multiple threads below)
        def processImage(fn):
            print('processing %s... ' % fn)
            img = cv2.imread(fn, 0)
            if img is None:
                print("Failed to load", fn)
                return None

            assert w == img.shape[1] and h == img.shape[0], ("size: %d x %d ... " % (img.shape[1], img.shape[0]))
            found, corners = cv2.findChessboardCorners(img, pattern_size)
            if found:
                term = (cv2.TERM_CRITERIA_EPS + cv2.TERM_CRITERIA_COUNT, 30, 0.1)
                cv2.cornerSubPix(img, corners, (5, 5), (-1, -1), term)

            vis = cv2.cvtColor(img, cv2.COLOR_GRAY2BGR)
            cv2.drawChessboardCorners(vis, pattern_size, corners, found)
            _path, name, _ext = splitfn(fn)
            outfile = os.path.join(out_chess_path, name + '_chess.png')
            cv2.imwrite(outfile, vis)

            if not found:
                print('chessboard not found')
                return None

            print(' %s... OK' % fn)
            return corners.reshape(-1, 2), pattern_points

        threads_num = args.threads
        if threads_num <= 1:
            chessboards = [processImage(fn) for fn in file_names]
        else:
            print("Run with %d threads..." % threads_num)
            from multiprocessing.dummy import Pool as ThreadPool
            pool = ThreadPool(threads_num)
            chessboards = pool.map(processImage, file_names)

        chessboards = [x for x in chessboards if x is not None]
        for (corners, pattern_points) in chessboards:
            img_points.append(corners)
            obj_points.append(pattern_points)

        # Calibrate: intrinsics, distortion coefficients, extrinsics
        rms, camera_matrix, dist_coefs, _rvecs, _tvecs = cv2.calibrateCamera(obj_points, img_points, (w, h), None, None)
        np.savez("calibrate_parm.npz", camera_matrix=camera_matrix, dist_coefs=dist_coefs)

        print("\nRMS:", rms)
        print("camera matrix:\n", camera_matrix)
        print("distortion coefficients: ", dist_coefs.ravel())

        # Undistort the chessboard images
        for fn in file_names:
            _path, name, _ext = splitfn(fn)
            img_found = os.path.join(out_chess_path, name + '_chess.png')
            outfile = os.path.join(out_chess_path, name + '_undistorted.png')
            outfile_crop = os.path.join(out_chess_path, name + '_undistorted_crop.png')

            img = cv2.imread(img_found)
            if img is None:
                continue

            h, w = img.shape[:2]
            newcameramtx, roi = cv2.getOptimalNewCameraMatrix(camera_matrix, dist_coefs, (w, h), 1, (w, h))
            dst = cv2.undistort(img, camera_matrix, dist_coefs, None, newcameramtx)

            x, y, w, h = roi
            dst_crop = dst[y:y + h, x:x + w]

            cv2.imwrite(outfile, dst)
            cv2.imwrite(outfile_crop, dst_crop)
            print('Undistorted image written to: %s' % outfile)

        # Undistort the real on-site images
        if args.real_path:
            out_real_path = os.path.join(args.out_path, "output_real")
            check_path(out_real_path)
            for file in os.listdir(args.real_path):
                fn = os.path.join(args.real_path, file)
                _path, name, _ext = splitfn(fn)
                outfile = os.path.join(out_real_path, name + '_undistorted.png')
                outfile_crop = os.path.join(out_real_path, name + '_undistorted_crop.png')

                real_img = cv2.imread(fn)
                if real_img is None:
                    continue

                h, w = real_img.shape[:2]
                newcameramtx, roi = cv2.getOptimalNewCameraMatrix(camera_matrix, dist_coefs, (w, h), 1, (w, h))
                dst = cv2.undistort(real_img, camera_matrix, dist_coefs, None, newcameramtx)

                x, y, w, h = roi
                dst_crop = dst[y:y + h, x:x + w]

                cv2.imwrite(outfile, dst)
                cv2.imwrite(outfile_crop, dst_crop)
                print('Undistorted real image written to: %s' % outfile)


    if __name__ == '__main__':
        parser = argparse.ArgumentParser()
        parser.add_argument('--chess_path', type=str, default="data/chess_img", help="folder of chessboard images")
        parser.add_argument('--real_path', type=str, default="data/real_img", help="folder of real on-site images")
        parser.add_argument('--threads', type=int, default=4)
        parser.add_argument('--square_size', type=float, default=1.0)
        parser.add_argument('--pattern_size', type=int, nargs=2, default=[9, 6], help="chessboard size (inner corners)")
        parser.add_argument('--out_path', type=str, default="run")
        opt = parser.parse_args()
        print(opt)
        calibrate(opt)

2. Marker-Based Camera Calibration

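2.1 Solving for Extrinsics with Known Intrinsics

At first I used the intrinsics and distortion coefficients from chessboard calibration, manually picked the positions of some markers in the image, and built a world coordinate system for them. Mapping points with the resulting intrinsics and extrinsics gave fairly large errors. Most likely cameras of the same model still have different intrinsics and distortion coefficients, or the chessboard calibration itself still needs improvement.

    retval, rvec, tvec = cv2.solvePnP(objectPoints, imagePoints, cameraMatrix, distCoeffs[, rvec[, tvec[, useExtrinsicGuess[, flags]]]])

Inputs:

- objectPoints: world coordinates
- imagePoints: pixel coordinates
- cameraMatrix: intrinsic matrix
- distCoeffs: distortion coefficients

Outputs:

- retval: success flag (whether a pose was found); unlike cv2.calibrateCamera, this is not a reprojection error
- rvec: rotation vector
- tvec: translation vector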

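2.2 World Coordinates to Pixel Coordinates

This method can be used to check how much error the solved parameters introduce when used for coordinate conversion.

    imagePoints, jacobian = cv2.projectPoints(objectPoints, rvec, tvec, cameraMatrix, distCoeffs[, imagePoints[, jacobian[, aspectRatio]]])

Inputs:

- objectPoints: world coordinates
- rvec: rotation vector
- tvec: translation vector
- cameraMatrix: intrinsic matrix
- distCoeffs: distortion coefficients

Outputs:

- imagePoints: pixel coordinates
- jacobian: Jacobian with respect to rotation, translation, focal length, principal point, and distortion

Additional notes:

With cameraMatrix=newCameraMatrix and distCoeffs=None, the resulting pixel coordinates are relative to the undistorted image dst.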

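2.3 Original-Image Coordinates to Undistorted-Image Coordinates

    dst = cv2.undistortPoints(src, cameraMatrix, distCoeffs[, dst[, R[, P]]])
    # Actual usage
    undistortImagePoints = cv2.undistortPoints(imagePoints, cameraMatrix, distCoeffs, None, np.eye(3), newcameramtx)

Inputs:

- src: coordinates in the original image
- cameraMatrix: intrinsic matrix
- distCoeffs: distortion coefficients
- P: new camera matrix; passing newcameramtx gives pixel coordinates in the undistorted image, while leaving it out gives normalized coordinates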

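2.4 Calibration from Manually Marked Points

In my practical scenario, a basketball court, it is enough to place markers on the ground at regular intervals (much like laying out a chessboard with markers) and then manually pick the markers' pixel coordinates in the captured image. This also yields the camera's intrinsics, extrinsics, and distortion coefficients.

To build the world coordinate system, draw a template image of the basketball court and pick the matching points on it by hand as well. The z coordinate is still 0.

The figure below maps points from the world coordinate system into the pixel coordinate system of the undistorted image as a check; the mapping is very accurate.

Notes:

(1) The point coordinates passed to cv2.calibrateCamera in the code must have dtype np.float32.

(2) The picked points do not need to form an $n \times m$ grid; they only need to correspond one to one.

The full code is given below for reference.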

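Explanation:

While marking, left-click adds a point and right-click removes the last one. When done, press s to start calibration.

camera_img_path: image captured by the camera

pattern_img_path: template image of the basketball court

camera_kpts_path: same as pattern_kpts_path below

pattern_kpts_path: npz file. Because this is a template image, the exact marker positions can be stored in advance. The internal auto_select_kpt function then snaps a nearby mouse click to the exact point, which makes picking easier. Pass None if there is no such file.

camera_select_kpts_path: same as pattern_select_kpts_path below

pattern_select_kpts_path: the selected points are saved after every run so they can be loaded directly next time and then extended or trimmed. Pass None if there is no such file.

mode: 'fisheye' selects the fisheye camera model; anything else selects the regular camera model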

    import cv2.fisheye
    from common import *


    class App(object):
        def __init__(self, camera_img_path, pattern_img_path,
                     camera_kpts_path=None, pattern_kpts_path=None,
                     camera_select_kpts_path=None, pattern_select_kpts_path=None,
                     save_path='output', mode=''):
            check_path(save_path)
            self.save_path = save_path
            self.mode = mode
            self.camera_window = 'camera'
            self.pattern_window = 'pattern'

            self.camera_img_path = camera_img_path
            self.camera_img = cv2.imread(camera_img_path)
            self.camera_kpts = None if camera_kpts_path is None else np.load(camera_kpts_path)['kpts']
            self.pattern_img_path = pattern_img_path
            self.pattern_img = cv2.imread(pattern_img_path)
            self.pattern_kpts = None if pattern_kpts_path is None else np.load(pattern_kpts_path)['kpts']

            self.pattern_kpts_select = self.read_select_kpts(pattern_select_kpts_path)
            self.camera_kpts_select = self.read_select_kpts(camera_select_kpts_path)

        @staticmethod
        def read_select_kpts(path):
            return [] if path is None else np.load(path)['kpts'].astype(np.int16).tolist()

        @staticmethod
        def auto_select_kpt(xy, kpts, dis=16):
            # Snap the click to the nearest stored keypoint if it is within dis pixels
            x, y = xy
            if kpts is None:
                return xy
            new_xy = kpts[np.argmin(np.sum((kpts - [x, y]) ** 2, 1))]
            if np.sum((new_xy - [x, y]) ** 2) < dis ** 2:
                return new_xy
            else:
                return xy

        @staticmethod
        def show_img(img, kpts, window, kpt_size=10, font_size=1):
            i_show = img.copy()
            for i, kpt in enumerate(kpts):
                x, y = kpt
                cv2.circle(i_show, (x, y), kpt_size, green, -1, 16)
                cv2.putText(i_show, str(i), (kpt[0] + 10, kpt[1]), cv2.FONT_HERSHEY_TRIPLEX, font_size, green, 1,
                            cv2.LINE_AA)
            cv2.imshow(window, i_show)
            return i_show

        # Pick points on the template image
        def pattern_mouse(self, event, x, y, flags, param):
            if event == cv2.EVENT_LBUTTONDOWN:
                x, y = self.auto_select_kpt((x, y), self.pattern_kpts)
                self.pattern_kpts_select.append((int(x), int(y)))
            if event == cv2.EVENT_RBUTTONDOWN:
                if len(self.pattern_kpts_select):
                    self.pattern_kpts_select.pop(-1)
            self.pattern_show = self.show_img(
                self.pattern_img, self.pattern_kpts_select, self.pattern_window, kpt_size=10, font_size=2)

        # Pick points on the camera image
        def camera_mouse(self, event, x, y, flags, param):
            if event == cv2.EVENT_LBUTTONDOWN:
                x, y = self.auto_select_kpt((x, y), self.camera_kpts)
                self.camera_kpts_select.append((int(x), int(y)))
            if event == cv2.EVENT_RBUTTONDOWN:
                if len(self.camera_kpts_select):
                    self.camera_kpts_select.pop(-1)
            self.camera_show = self.show_img(
                self.camera_img, self.camera_kpts_select, self.camera_window, kpt_size=5, font_size=1)

        def run(self):
            cv2.namedWindow(self.pattern_window, cv2.WINDOW_NORMAL | cv2.WINDOW_KEEPRATIO)
            cv2.namedWindow(self.camera_window, cv2.WINDOW_NORMAL | cv2.WINDOW_KEEPRATIO)
            cv2.setMouseCallback(self.pattern_window, self.pattern_mouse)
            cv2.setMouseCallback(self.camera_window, self.camera_mouse)

            while 1:
                self.pattern_show = self.show_img(
                    self.pattern_img, self.pattern_kpts_select, self.pattern_window, kpt_size=10, font_size=2)
                self.camera_show = self.show_img(
                    self.camera_img, self.camera_kpts_select, self.camera_window, kpt_size=5, font_size=1)
                key = cv2.waitKey(0)
                if key == ord('q'):
                    cv2.destroyAllWindows()
                    break
                if key == ord('s'):
                    cv2.imwrite(os.path.join(self.save_path, "pattern_select_kpts.jpg"), self.pattern_show)
                    cv2.imwrite(os.path.join(self.save_path, "camera_select_kpts.jpg"), self.camera_show)
                    cv2.destroyAllWindows()
                    self.img_calibrate()
                    break

        def img_calibrate(self):
            _, name, _ = splitfn(self.camera_img_path)
            camera_pts = np.array(self.camera_kpts_select, dtype=np.float32)
            pattern_pts = np.array(self.pattern_kpts_select, dtype=np.float32)
            np.savez(os.path.join(self.save_path, f"{name}_pattern_select_kpts.npz"), kpts=pattern_pts)
            np.savez(os.path.join(self.save_path, f"{name}_camera_select_kpts.npz"), kpts=camera_pts)

            # Append z = 0 to turn template pixels into 3D world coordinates
            pattern_pts = np.concatenate((pattern_pts, np.zeros((len(self.pattern_kpts_select), 1))), axis=1).astype(
                np.float32)
            h, w = self.camera_img.shape[:2]

            if self.mode == 'fisheye':
                flags = 0
                flags |= cv2.fisheye.CALIB_RECOMPUTE_EXTRINSIC
                flags |= cv2.fisheye.CALIB_CHECK_COND
                flags |= cv2.fisheye.CALIB_FIX_SKEW
                criteria = (cv2.TERM_CRITERIA_EPS + cv2.TERM_CRITERIA_MAX_ITER, 30, 1e-6)
                # retval, K, D, rvecs, tvecs
                rms, camera_matrix, dist_coefs, _rvecs, _tvecs = cv2.fisheye.calibrate(
                    [pattern_pts.reshape((-1, 1, 3))], [camera_pts.reshape((-1, 1, 2))], (w, h),
                    None, None, None, None, flags=flags, criteria=criteria)
                newcameramtx = cv2.fisheye.estimateNewCameraMatrixForUndistortRectify(
                    camera_matrix, dist_coefs, (w, h), None, None, 0, (w, h))
                dst = cv2.fisheye.undistortImage(
                    self.camera_img, camera_matrix, dist_coefs, None, newcameramtx, new_size=(w, h))
            else:
                rms, camera_matrix, dist_coefs, _rvecs, _tvecs = cv2.calibrateCamera(
                    [pattern_pts], [camera_pts], (w, h), None, None)
                newcameramtx, roi = cv2.getOptimalNewCameraMatrix(camera_matrix, dist_coefs, (w, h), 1, (w, h))
                dst = cv2.undistort(self.camera_img, camera_matrix, dist_coefs, None, newcameramtx)
                x, y, w, h = roi
                dst_crop = dst[y:y + h, x:x + w]
                cv2.namedWindow("result_crop", cv2.WINDOW_NORMAL | cv2.WINDOW_KEEPRATIO)
                cv2.imshow("result_crop", dst_crop)
                cv2.imwrite(os.path.join(self.save_path, f"{name}_result_crop.jpg"), dst_crop)

            print(rms)
            np.savez(os.path.join(self.save_path, f"{name}_calibrate_parm.npz"),
                     camera_matrix=camera_matrix, dist_coefs=dist_coefs, _rvecs=_rvecs, _tvecs=_tvecs)
            cv2.namedWindow("result", cv2.WINDOW_NORMAL | cv2.WINDOW_KEEPRATIO)
            cv2.imshow("result", dst)
            cv2.waitKey(0)
            cv2.destroyAllWindows()
            cv2.imwrite(os.path.join(self.save_path, f"{name}_result.jpg"), dst)


    # Real image + template image + manual point picking
    # --> saves the picked points, intrinsics, extrinsics, and distortion coefficients
    calibration = App(
        camera_img_path="data/camera/nike_top_2.jpeg",
        pattern_img_path="data/pattern/court3.jpg",
        camera_kpts_path=None,
        pattern_kpts_path="data/pattern/court3_kpts.npz",
        camera_select_kpts_path="data/camera/nike_top_2_camera_select_kpts.npz",
        pattern_select_kpts_path="data/camera/nike_top_2_pattern_select_kpts.npz",
        mode='fisheye')
    calibration.run()

common.py:

    # common.py
    import os
    import cv2
    import numpy as np

    white = (255, 255, 255)
    black = (0, 0, 0)
    blue = (255, 0, 0)
    green = (0, 255, 0)
    red = (0, 0, 255)


    def check_path(path):
        if not os.path.exists(path):
            os.mkdir(path)


    def splitfn(fn):
        path, fn = os.path.split(fn)
        name, ext = os.path.splitext(fn)
        return path, name, ext


    def kpts_hom_tran(kpts, hom):
        """
        :param kpts: nx3 source points in homogeneous coordinates (x, y, 1)
        :param hom: 3x3 homography matrix
        :return: nx3 transformed points, normalised so the last column is 1
        """
        kpts_tran = np.matmul(hom, kpts.T).T
        return kpts_tran / kpts_tran[:, 2:]

3. Pixel Coordinates to World Coordinates (Pseudo)

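First, 2D pixel coordinates cannot be converted to 3D world coordinates in general. This is easy to see: a pixel carries no depth information, so the same pixel can be near or far, which means it corresponds to infinitely many points in the world coordinate system.

For my purposes, however, it is enough to convert points on the basketball court floor to world coordinates. So the idea is to compute a homography on the undistorted image, which makes it easy to go from 2D coordinates to 3D. In principle the solved extrinsics should be convertible to a homography as well, but I have not verified this in practice.

    # Recap of the workflow

    # 1. Marking points gives corresponding coordinates in the original image and the template image
    #    camera_img, camera_pts: (n, 2) float32 pixel coordinates
    #    pattern_img, pattern_pts: (n, 3) float32 world coordinates with z = 0

    # 2. Solve for intrinsics, extrinsics, and distortion coefficients
    rms, camera_matrix, dist_coefs, _rvecs, _tvecs = cv2.calibrateCamera([pattern_pts], [camera_pts], (w, h), None, None)

    # 3. Undistort. Note alpha is 0, so dst is an undistorted image that needs no cropping
    newcameramtx, roi = cv2.getOptimalNewCameraMatrix(camera_matrix, dist_coefs, (w, h), 0, (w, h))
    dst = cv2.undistort(camera_img, camera_matrix, dist_coefs, None, newcameramtx)

    # 4. Project the world points onto the undistorted image (left panel of the figure below)
    #    Only one view was calibrated, so use its rvec / tvec
    kpts_map, _ = cv2.projectPoints(pattern_pts, _rvecs[0], _tvecs[0], newcameramtx, None)
    kpts_map = kpts_map.reshape(-1, 2)

    # 5. Solve for the homography from the undistorted image to the template image
    hom, status = cv2.findHomography(kpts_map, pattern_pts[:, :2], cv2.RANSAC, 5)

    # 6. Map points from the undistorted image onto the template with the homography
    #    (middle panel of the figure below); kpts_hom_tran is in common.py from section 2.4
    dst_kpts = np.concatenate((kpts_map, np.ones((len(kpts_map), 1))), axis=1).astype(np.float32)
    dst2pattern_kpts = kpts_hom_tran(dst_kpts, hom)

    # 7. Warp the undistorted image onto the template and overlay them (right panel of the figure below);
    #    the markers can faintly be seen at the corresponding positions
    h, w = pattern_img.shape[:2]
    dst_homo = cv2.warpPerspective(dst, hom, (w, h))
    dst_homo = cv2.addWeighted(dst_homo, 0.7, pattern_img, 0.3, 0)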

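4. World Coordinate System

A note on what I learned about the world coordinate system: many articles on the theory give world coordinates in metres, but in practice the unit is not fixed, as long as the proportions match the real world.

For example, I drew the basketball court template at 1 pixel per centimetre: the court is 15 m × 28 m and the template resolution is 1500 × 2800. When picking points I used the pixel coordinates directly as world coordinates, with the top-left corner of the template as the origin, X pointing right, and Y pointing down, so the resulting world coordinates are actually in cm. The parameters solved this way work perfectly well for coordinate mapping. Even so, it is better to build the world coordinates in metres: the resulting parameters are more portable and never need rescaling because of inconsistent world units.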

5. Fisheye vs. Regular Camera API Summary

Using the basketball court above as the example, assume the original image coordinates and the template image coordinates (world coordinates) are already available. In each step the regular camera call comes first and the fisheye call second.

    # camera_img: (h, w, 3)
    # pattern_img: (hp, wp, 3)
    # camera_pts: (n, 2) float32
    # pattern_pts: (n, 3) float32

    # 1. Solve for the parameters
    rms, camera_matrix, dist_coefs, _rvecs, _tvecs = cv2.calibrateCamera([pattern_pts], [camera_pts], (w, h), None, None)
    rms, K, D, _rvecs, _tvecs = cv2.fisheye.calibrate([pattern_pts.reshape((-1, 1, 3))], [camera_pts.reshape((-1, 1, 2))], (w, h), None, None)

    # 2. Compute the new intrinsic matrix
    newcameramtx, roi = cv2.getOptimalNewCameraMatrix(camera_matrix, dist_coefs, (w, h), 0, (w, h))
    newcameramtx = cv2.fisheye.estimateNewCameraMatrixForUndistortRectify(K, D, (w, h), None, None, 0, (w, h))

    # 3. Undistort
    dst = cv2.undistort(camera_img, camera_matrix, dist_coefs, None, newcameramtx)
    dst = cv2.fisheye.undistortImage(camera_img, K, D, None, newcameramtx, new_size=(w, h))

    # 4. Undistort via remap; CV_16SC2 is faster
    map1, map2 = cv2.initUndistortRectifyMap(camera_matrix, dist_coefs, None, newcameramtx, (w, h), cv2.CV_16SC2)
    map1, map2 = cv2.fisheye.initUndistortRectifyMap(K, D, np.eye(3), newcameramtx, (w, h), cv2.CV_16SC2)
    dst = cv2.remap(camera_img, map1, map2, cv2.INTER_LINEAR)

    # 5. World coordinates to pixel coordinates (original image)
    #    _rvecs / _tvecs hold one entry per view, so index the single view used here
    pattern2camera_pts, _ = cv2.projectPoints(pattern_pts, _rvecs[0], _tvecs[0], camera_matrix, dist_coefs)
    pattern2camera_pts, _ = cv2.fisheye.projectPoints(pattern_pts.reshape((-1, 1, 3)), _rvecs[0], _tvecs[0], K, D)

    # 6. World coordinates to pixel coordinates (undistorted image)
    pattern2dst_pts, _ = cv2.projectPoints(pattern_pts, _rvecs[0], _tvecs[0], newcameramtx, None)

    # 7. Original-image coordinates to undistorted-image coordinates
    camera2dst_pts = cv2.undistortPoints(camera_pts, camera_matrix, dist_coefs, None, np.eye(3), newcameramtx)
    camera2dst_pts = cv2.fisheye.undistortPoints(camera_pts.reshape((-1, 1, 2)), K, D, None, np.eye(3), newcameramtx)

6. About Errors

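This article only records the APIs I have actually used. For the full feature set, see the official OpenCV documentation. I suggest first skimming the Functions section at the top with your requirements in mind, picking candidate APIs from their descriptions, and then reading the details under Function Documentation further down.

The official documentation supports Python poorly. In particular, the expected format of the input data is unclear. Common errors include:

(1) retval, cameraMatrix, distCoeffs, rvecs, tvecs = cv2.calibrateCamera(objectPoints, imagePoints, imageSize, cameraMatrix, distCoeffs[, rvecs[, tvecs[, flags[, criteria]]]])

- error: (-210:Unsupported format or combination of formats) objectPoints should contain vector of vectors of points of type Point3f in function 'collectCalibrationData'

- error: (-210:Unsupported format or combination of formats) imagePoints1 should contain vector of vectors of points of type Point2f in function 'collectCalibrationData'

Both errors mean that objectPoints and imagePoints have the wrong data type. They must be float32, whereas NumPy usually defaults to float64. In addition, the (n, 3) and (n, 2) arrays must be placed in a list, or reshaped to (1, n, 3) and (1, n, 2). I recommend following the official sample and putting them in a list.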

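(2) retval, K, D, rvecs, tvecs = cv2.fisheye.calibrate(objectPoints, imagePoints, image_size, K, D[, rvecs[, tvecs[, flags[, criteria]]]])

- error: (-215:Assertion failed) objectPoints.type() == CV_32FC3 || objectPoints.type() == CV_64FC3 in function 'calibrate'

- error: (-215:Assertion failed) imagePoints.type() == CV_32FC2 || imagePoints.type() == CV_64FC2 in function 'calibrate'

These two errors also mean that objectPoints and imagePoints have the wrong data type. Here both float32 and float64 are accepted, but the (n, 3) and (n, 2) arrays must be reshaped to (n, 1, 3) and (n, 1, 2) and then placed in a list.

After a long time in the official documentation, the only thing I could pin down was the data type: the f in Point3f stands for float (32-bit), while 64-bit is Point3d for double; in CV_32FC3, 32F means 32-bit float and C3 means 3 channels (3D coordinates). I could not find a clear statement of the expected array dimensions, so the only option is to look at how the tutorial code does it.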
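The two layouts described above can be wrapped in small helpers so the conversion is done in one place. This is a minimal sketch with NumPy only; the function names `to_pinhole_inputs` and `to_fisheye_inputs` are made up for illustration, and the shapes follow the description above for a single view.

```python
import numpy as np


def to_pinhole_inputs(world_pts, pixel_pts):
    """Single-view inputs for cv2.calibrateCamera: lists of (n, 3) / (n, 2) float32."""
    obj = np.asarray(world_pts, dtype=np.float32).reshape(-1, 3)
    img = np.asarray(pixel_pts, dtype=np.float32).reshape(-1, 2)
    return [obj], [img]


def to_fisheye_inputs(world_pts, pixel_pts):
    """Single-view inputs for cv2.fisheye.calibrate: lists of (n, 1, 3) / (n, 1, 2)."""
    obj = np.asarray(world_pts, dtype=np.float32).reshape(-1, 1, 3)
    img = np.asarray(pixel_pts, dtype=np.float32).reshape(-1, 1, 2)
    return [obj], [img]


# Hand-picked points usually start life as plain Python lists (float64 once converted)
world = [[0, 0, 0], [100, 0, 0], [0, 100, 0], [100, 100, 0]]
pixels = [[12, 20], [410, 25], [8, 390], [405, 400]]

obj_p, img_p = to_pinhole_inputs(world, pixels)
obj_f, img_f = to_fisheye_inputs(world, pixels)
print(obj_p[0].shape, img_p[0].shape, obj_p[0].dtype)
print(obj_f[0].shape, img_f[0].shape, obj_f[0].dtype)
```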

Source: https://blog.csdn.net/weixin_43605641/article/details/128109213