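This article shows how to scrape Baidu's COVID-19 epidemic data with Python and the Scrapy framework. It may be a useful reference if you are building something similar, so let's walk through it.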

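Environment setup

Just one brief recommendation here.

Recommended plugin

XPath Helper is a Google Chrome extension that lets you check whether an XPath expression is correct by running it against the page you have open.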

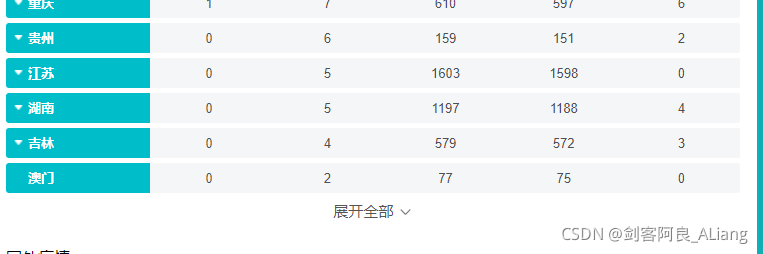
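
The same kind of check can also be done offline. The following is a minimal sketch using only the standard library; the markup in `SAMPLE` and the helper name `xpath_texts` are made up for illustration and are not the real Baidu page structure. Note that `xml.etree.ElementTree` supports only a subset of XPath and needs well-formed markup, whereas Scrapy's own `response.xpath()` handles real-world HTML.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed fragment standing in for the real page markup.
SAMPLE = """
<table>
  <tr><td class="location">Province A</td><td class="total">100</td></tr>
  <tr><td class="location">Province B</td><td class="total">20</td></tr>
</table>
"""


def xpath_texts(markup, expression):
    """Return the text of every element matched by the XPath expression."""
    root = ET.fromstring(markup.strip())
    return [element.text for element in root.findall(expression)]


locations = xpath_texts(SAMPLE, ".//td[@class='location']")
print(locations)
```

If the expression matches nothing, the list is empty, which is usually the first sign that the path or the attribute name is wrong.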

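Crawl target

Page to crawl: the Baidu page titled "实时更新:新型冠状病毒肺炎疫情地图" (Real-time updates: COVID-19 epidemic map).

The targets are the nationwide figures and the figures for each province.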

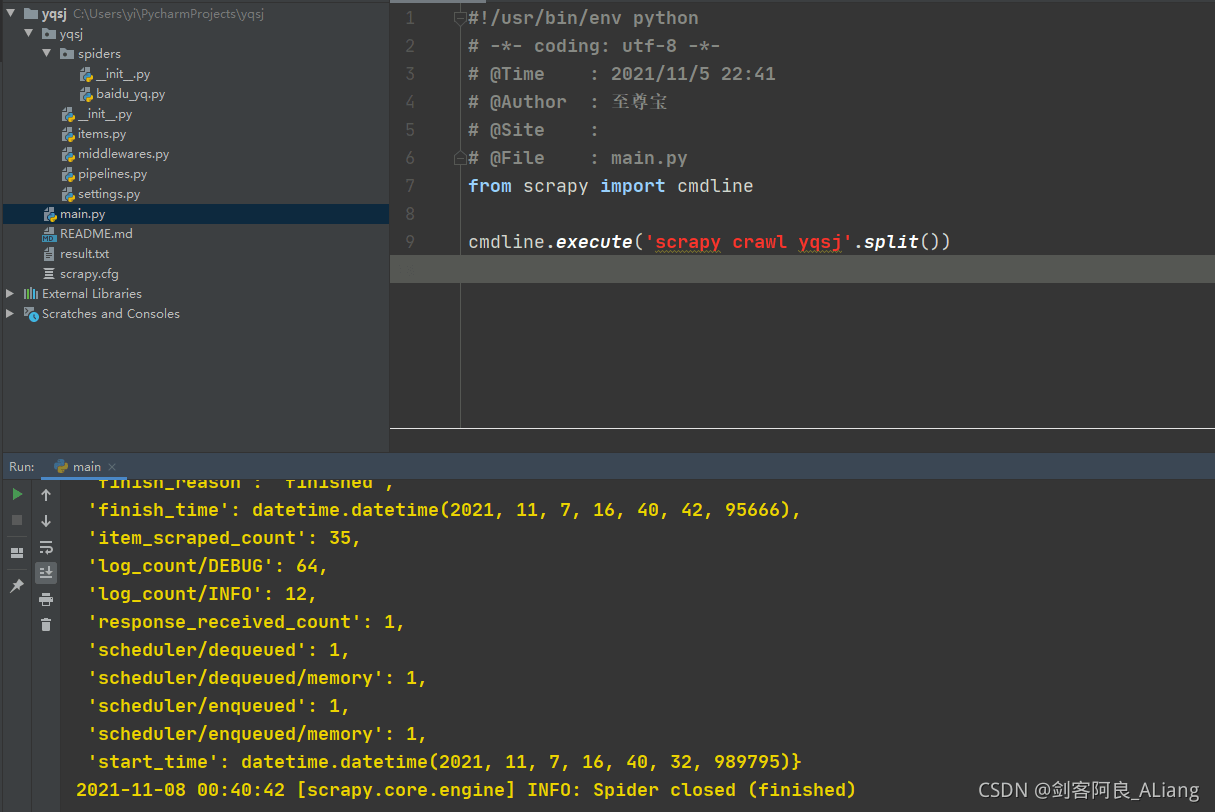

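Creating the project

Create the project with the scrapy command:

    scrapy startproject yqsj

webdriver deployment

I won't go through this again here. See the deployment steps in my article "Python 详解通过Scrapy框架实现爬取CSDN全站热榜热词流程" (a walkthrough of scraping CSDN's site-wide hot-list keywords with Scrapy).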
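
The middleware later in this article calls `spider.browser`, so the spider needs a WebDriver instance attached to it. The following is only a sketch of one way to create it, assuming Selenium 4 and a local Chrome installation; the helper names `chrome_arguments` and `make_browser` and the window size are my own choices, not taken from the referenced article.

```python
def chrome_arguments(headless=True):
    """Command-line switches passed to Chrome (illustrative values)."""
    arguments = ["--window-size=1920,1080"]
    if headless:
        # Run without opening a visible browser window.
        arguments.append("--headless")
    return arguments


def make_browser(headless=True):
    """Create a Chrome WebDriver; needs `pip install selenium` and Chrome."""
    # Imported lazily so chrome_arguments() works without Selenium installed.
    from selenium import webdriver

    options = webdriver.ChromeOptions()
    for argument in chrome_arguments(headless):
        options.add_argument(argument)
    return webdriver.Chrome(options=options)


print(chrome_arguments())
```

In a spider this would typically be called once in `__init__` (for example `self.browser = make_browser()`), with `self.browser.quit()` in the spider's `closed()` method so the browser process does not linger after the crawl.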

Project code

Time to write some code. First, look at a problem with Baidu's province-level epidemic data.

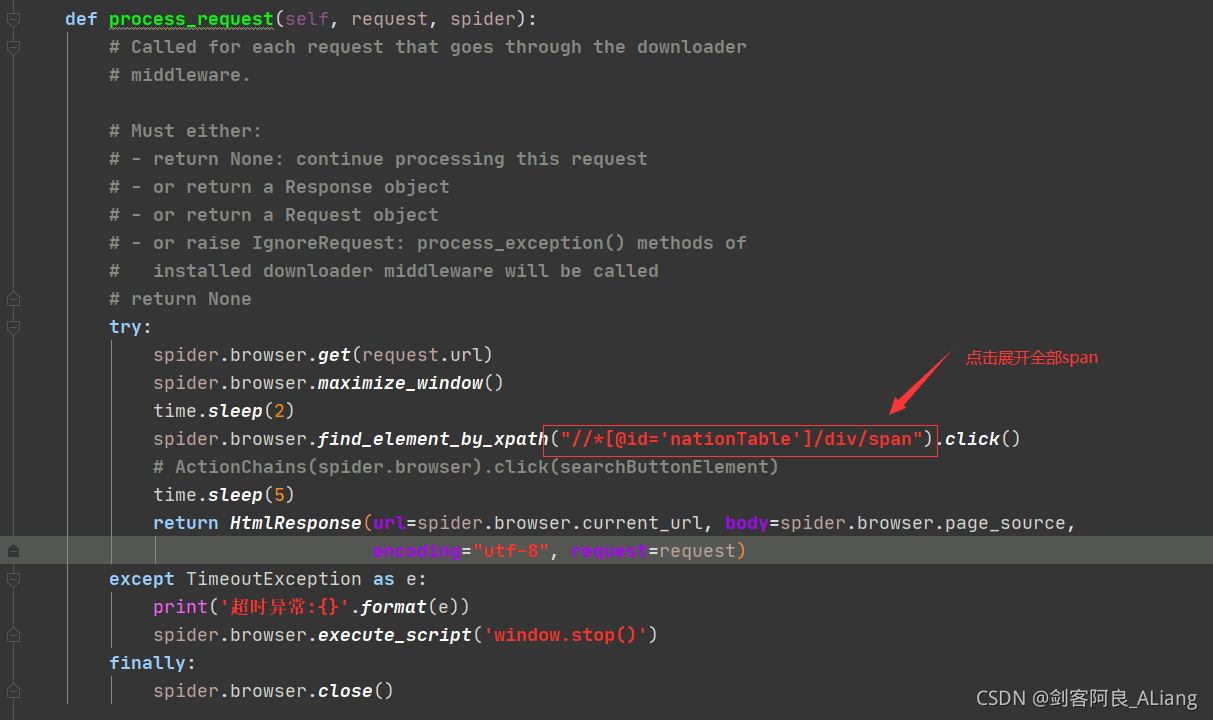

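The province table is collapsed by default, and the full list only appears after clicking the "展开全部" (expand all) span. To get the complete page source we therefore have to open the page in a simulated browser and click that button first. With that in mind, let's go step by step.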
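
The open, click, then read the source sequence can be sketched as below. This is an illustration rather than the article's exact code: `expand_and_get_source`, `expand_xpath`, and `settle_seconds` are hypothetical names, and the real XPath of the button has to be looked up in the page (for instance with XPath Helper). It uses the Selenium 4 style `find_element(by, value)` call.

```python
import time


def expand_and_get_source(browser, expand_xpath, settle_seconds=2.0):
    """Click the element matched by expand_xpath, then return the page HTML.

    `browser` is a Selenium WebDriver, or any object offering the same
    find_element() method and page_source attribute.
    """
    # "xpath" is the value of selenium.webdriver.common.by.By.XPATH.
    button = browser.find_element("xpath", expand_xpath)
    button.click()
    # Give the page a moment to render the newly revealed rows.
    time.sleep(settle_seconds)
    return browser.page_source
```

Inside a downloader middleware's `process_request`, the returned HTML can be wrapped in `scrapy.http.HtmlResponse(url=request.url, body=html, encoding="utf-8", request=request)` and returned; when `process_request` returns a response, Scrapy skips its own download and hands that response to the spider.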

Item definition

Define two classes, YqsjProvinceItem and YqsjChinaItem, for the per-province data and the nationwide data respectively.

    # Define here the models for your scraped items
    #
    # See documentation in:
    # https://docs.scrapy.org/en/latest/topics/items.html

    import scrapy


    class YqsjProvinceItem(scrapy.Item):
        # define the fields for your item here like:
        # name = scrapy.Field()
        location = scrapy.Field()
        new = scrapy.Field()
        exist = scrapy.Field()
        total = scrapy.Field()
        cure = scrapy.Field()
        dead = scrapy.Field()


    class YqsjChinaItem(scrapy.Item):
        # define the fields for your item here like:
        # name = scrapy.Field()
        # Currently confirmed
        exist_diagnosis = scrapy.Field()
        # Asymptomatic
        asymptomatic = scrapy.Field()
        # Currently suspected
        exist_suspecte = scrapy.Field()
        # Currently severe
        exist_severe = scrapy.Field()
        # Cumulative confirmed
        cumulative_diagnosis = scrapy.Field()
        # Imported from abroad
        overseas_input = scrapy.Field()
        # Cumulative recovered
        cumulative_cure = scrapy.Field()
        # Cumulative deaths
        cumulative_dead = scrapy.Field()

Middleware definition

After the page opens, the "expand all" control has to be clicked once before the page source is read.
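
A fixed pause before the click works but is fragile: too short and the button is not rendered yet, too long and every request wastes time. As a sketch of the alternative, here is a small polling helper built only on the standard library; `wait_until` and its parameters are hypothetical names of mine. Selenium ships `WebDriverWait` for the same purpose, which is the more usual choice when Selenium is already in use.

```python
import time


def wait_until(predicate, timeout=10.0, interval=0.5):
    """Poll predicate() until it returns a truthy value or timeout elapses."""
    deadline = time.monotonic() + timeout
    while True:
        result = predicate()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(interval)
```

With a WebDriver, the predicate could be something like `lambda: browser.find_elements("xpath", expand_xpath)`, which returns an empty (falsy) list until the element exists.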

Full code

    # Define here the models for your spider middleware
    #
    # See documentation in:
    # https://docs.scrapy.org/en/latest/topics/spider-middleware.html

    from scrapy import signals

    # useful for handling different item types with a single interface
    from itemadapter import is_item, ItemAdapter
    from scrapy.http import HtmlResponse
    from selenium.common.exceptions import TimeoutException
    from selenium.webdriver import ActionChains
    import time


    class YqsjSpiderMiddleware:
        # Not all methods need to be defined. If a method is not defined,
        # scrapy acts as if the spider middleware does not modify the
        # passed objects.

        @classmethod
        def from_crawler(cls, crawler):
            # This method is used by Scrapy to create your spiders.
            s = cls()
            crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
            return s

        def process_spider_input(self, response, spider):
            # Called for each response that goes through the spider
            # middleware and into the spider.

            # Should return None or raise an exception.
            return None

        def process_spider_output(self, response, result, spider):
            # Called with the results returned from the Spider, after
            # it has processed the response.

            # Must return an iterable of Request, or item objects.
            for i in result:
                yield i

        def process_spider_exception(self, response, exception, spider):
            # Called when a spider or process_spider_input() method
            # (from other spider middleware) raises an exception.

            # Should return either None or an iterable of Request or item objects.
            pass

        def process_start_requests(self, start_requests, spider):
            # Called with the start requests of the spider, and works
            # similarly to the process_spider_output() method, except
            # that it doesn't have a response associated.

            # Must return only requests (not items).
            for r in start_requests:
                yield r

        def spider_opened(self, spider):
            spider.logger.info('Spider opened: %s' % spider.name)


    class YqsjDownloaderMiddleware:
        # Not all methods need to be defined. If a method is not defined,
        # scrapy acts as if the downloader middleware does not modify the
        # passed objects.

        @classmethod
        def from_crawler(cls, crawler):
            # This method is used by Scrapy to create your spiders.
            s = cls()
            crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
            return s

        def process_request(self, request, spider):
            # Called for each request that goes through the downloader
            # middleware.

            # Must either:
            # - return None: continue processing this request
            # - or return a Response object
            # - or return a Request object
            # - or raise IgnoreRequest: process_exception() methods of
            #   installed downloader middleware will be called
            # return None
            try:
                spider.browser.get(request.url)
                spider.browser.maximize_window()
                time.sleep(2)
                spider.browser.find_element_by_xpath("…

[The source text breaks off at this point. The rest of the middleware and the code up to the middle of the request-headers dictionary in the project settings are missing. What follows is the tail of the settings file.]

    … /*;q=0.8',
        'Accept-Language': 'en',
        'User-Agent': 'Mozilla/5.0 (Windows NT 6.2; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/27.0.1453.94 Safari/537.36'
    }

    # Enable or disable spider middlewares
    # See https://docs.scrapy.org/en/latest/topics/spider-middleware.html
    SPIDER_MIDDLEWARES = {
        'yqsj.middlewares.YqsjSpiderMiddleware': 543,
    }

    # Enable or disable downloader middlewares
    # See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html
    DOWNLOADER_MIDDLEWARES = {
        'yqsj.middlewares.YqsjDownloaderMiddleware': 543,
    }

    # Enable or disable extensions
    # See https://docs.scrapy.org/en/latest/topics/extensions.html
    #EXTENSIONS = {
    #    'scrapy.extensions.telnet.TelnetConsole': None,
    #}

    # Configure item pipelines
    # See https://docs.scrapy.org/en/latest/topics/item-pipeline.html
    ITEM_PIPELINES = {
        'yqsj.pipelines.YqsjPipeline': 300,
    }

    # Enable and configure the AutoThrottle extension (disabled by default)
    # See https://docs.scrapy.org/en/latest/topics/autothrottle.html
    #AUTOTHROTTLE_ENABLED = True
    # The initial download delay
    #AUTOTHROTTLE_START_DELAY = 5
    # The maximum download delay to be set in case of high latencies
    #AUTOTHROTTLE_MAX_DELAY = 60
    # The average number of requests Scrapy should be sending in parallel to
    # each remote server
    #AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0
    # Enable showing throttling stats for every response received:
    #AUTOTHROTTLE_DEBUG = False

    # Enable and configure HTTP caching (disabled by default)
    # See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings
    #HTTPCACHE_ENABLED = True
    #HTTPCACHE_EXPIRATION_SECS = 0
    #HTTPCACHE_DIR = 'httpcache'
    #HTTPCACHE_IGNORE_HTTP_CODES = []
    #HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

Verifying the results

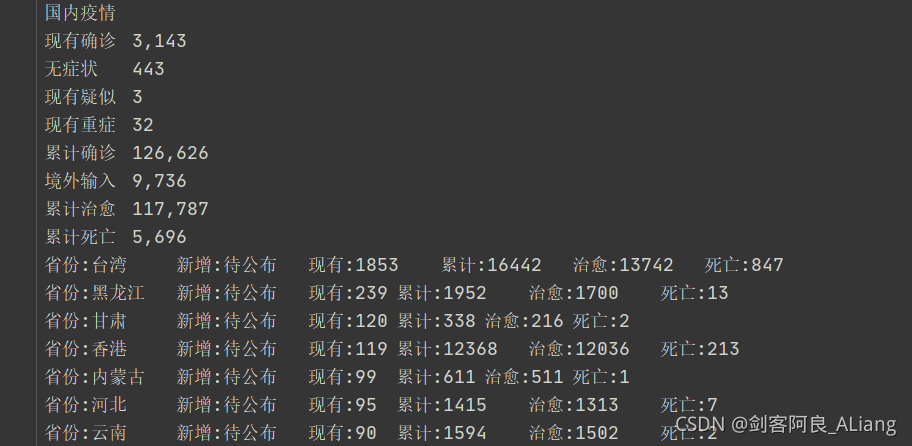

Take a look at the result file.
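
The settings register `yqsj.pipelines.YqsjPipeline`, but the pipeline code itself does not appear in this text, so the format of the result file is not known. Purely as an illustration of how a Scrapy item pipeline can write a result file, here is a sketch that stores one JSON object per line; the class name `JsonLinesPipeline` and the default file name are assumptions of mine, not the author's implementation.

```python
import json


class JsonLinesPipeline:
    """Hypothetical pipeline: append each scraped item to a JSON Lines file."""

    def __init__(self, path="yqsj_result.jsonl"):
        self.path = path
        self.file = None

    def open_spider(self, spider):
        # Called by Scrapy once when the spider starts.
        self.file = open(self.path, "w", encoding="utf-8")

    def process_item(self, item, spider):
        # dict() works for plain dicts and for scrapy.Item instances alike.
        line = json.dumps(dict(item), ensure_ascii=False)
        self.file.write(line + "\n")
        # Return the item so that later pipelines still receive it.
        return item

    def close_spider(self, spider):
        # Called by Scrapy once when the spider finishes.
        self.file.close()
```

`ensure_ascii=False` keeps Chinese province names readable in the output instead of escaping them.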
