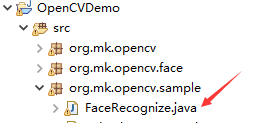

This article shows how to use Java and OpenCV to drive a camera and take photos from a Swing application. It covers the environment, the window layout, the core code, and the full source listing.

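Environment setup

A USB camera. I use two kinds: an ordinary Logitech webcam and a binocular (stereo) camera, which will be used later for liveness detection;

Eclipse 2021-12;

JDK 11 or later, because we build the Swing UI with the WindowBuilder form designer plugin, and in Eclipse 2021-12 WindowBuilder requires JDK 11 or later;

Windows 10 as the development environment. A Mac also works, but driving a camera from Java on a Mac has a catch: you cannot access the camera when the program is launched directly from Eclipse. It fails with “This app has crashed because it attempted to access privacy-sensitive data without a usage description”, or with: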

OpenCV: not authorized to capture video (status 0), requesting...

OpenCV: can not spin main run loop from other thread, set OPENCV_AVFOUNDATION_SKIP_AUTH=1 to disable authorization request and perform it in your application.

OpenCV: camera failed to properly initialize

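These errors all come from macOS permissions: the process has not been granted access to the Mac's built-in devices. If you were writing Swift in Xcode, you could solve this through Info.plist. But when a Java main method is launched from inside Eclipse, I have not found a way to grant it access to Mac peripherals. To run OpenCV Java and drive the camera on macOS, you have to package the project as an executable jar and launch it from a terminal window with java -jar. On launch, macOS prompts you to authorize the terminal window; click [Yes] and confirm with your fingerprint or password, then run java -jar again in the terminal, and Java can drive the camera. This makes coding and debugging very inconvenient, which is the main reason we develop OpenCV Java on Windows 10.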

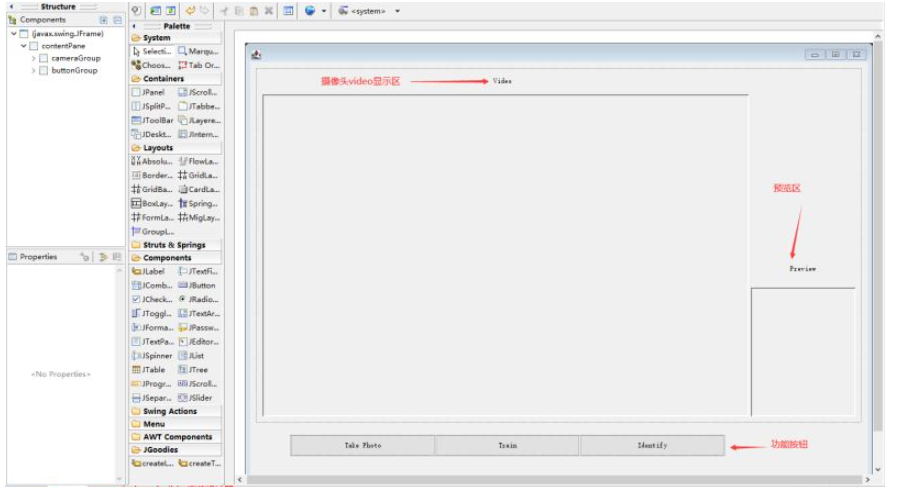

Building the main window

Our main window is a Java Swing JFrame application. It looks like this:

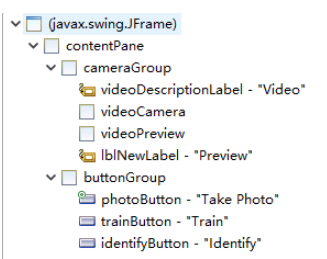

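Overall structure

We split the screen into an upper and a lower area. The window is 1024*768, uses absolute (null) layout, and exits the program when the close button is clicked: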

setDefaultCloseOperation(JFrame.EXIT_ON_CLOSE);

setBounds(100, 100, 1024, 768);

contentPane = new JPanel();

contentPane.setBorder(new EmptyBorder(5, 5, 5, 5));

setContentPane(contentPane);

contentPane.setLayout(null);

Upper area

We group the upper area in a JPanel called cameraGroup, which also uses absolute layout:

JPanel cameraGroup = new JPanel();

cameraGroup.setBounds(10, 10, 988, 580);

contentPane.add(cameraGroup);

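cameraGroup.setLayout(null);

Inside cameraGroup we then place two more panels, a large one on the left and a small one on the right:

videoCamera

videoPreview

videoCamera is a custom JPanel:

protected static VideoPanel videoCamera = new VideoPanel();

While the camera is open, it keeps painting the frames read from the camera onto the panel. Its code is as follows: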

package org.mk.opencv;

import java.awt.*;

import java.awt.image.BufferedImage;

import javax.swing.*;

import org.mk.opencv.util.ImageUtils;

import org.mk.opencv.util.OpenCVUtil;

import org.opencv.core.Mat;

public class VideoPanel extends JPanel {

private Image image;

public void setImageWithMat(Mat mat) {

image = OpenCVUtil.matToBufferedImage(mat);

this.repaint();

}

public void SetImageWithImg(Image img) {

image = img;

}

public Mat getMatFromImage() {

Mat faceMat = new Mat();

BufferedImage bi = ImageUtils.toBufferedImage(image);

faceMat = OpenCVUtil.bufferedImageToMat(bi);

return faceMat;

}

@Override

protected void paintComponent(Graphics g) {

super.paintComponent(g);

if (image != null)

g.drawImage(image, 0, 0, image.getWidth(null), image.getHeight(null), this);

}

public static VideoPanel show(String title, int width, int height, int open) {

JFrame frame = new JFrame(title);

if (open == 0) {

frame.setDefaultCloseOperation(JFrame.EXIT_ON_CLOSE);

} else {

frame.setDefaultCloseOperation(JFrame.DISPOSE_ON_CLOSE);

}

frame.setSize(width, height);

frame.setBounds(0, 0, width, height);

VideoPanel videoPanel = new VideoPanel();

videoPanel.setSize(width, height);

frame.setContentPane(videoPanel);

frame.setVisible(true);

return videoPanel;

}

}

Lower area

In the lower area we place a panel called buttonGroup. It uses a GridLayout with a single row and holds three buttons.

JPanel buttonGroup = new JPanel();

buttonGroup.setBounds(65, 610, 710, 35);

contentPane.add(buttonGroup);

buttonGroup.setLayout(new GridLayout(1, 0, 0, 0));

Today we implement the functionality behind photoButton.

With the layout covered, let's move on to the core code.

Core code and key concepts

(The full code is listed at the end.)

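How to display the camera image in a JPanel

A JPanel normally sits inside the JFrame's contentPane (the container that the graphical designer generates for a JFrame to hold its other components).

You can think of contentPane as a container. The nesting usually looks like this:

JFrame (our main class) -> contentPane -> our own upper JPanel -> videoCamera (JPanel).

Java Swing has a method called repaint(). Calling it on a component schedules a repaint, and Swing then invokes the following method on that component and, in turn, on each of its child components:

protected void paintComponent(Graphics g)

That is why we define a custom JPanel called VideoPanel and override its paintComponent method: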

@Override

protected void paintComponent(Graphics g) {

super.paintComponent(g);

if (image != null)

g.drawImage(image, 0, 0, image.getWidth(null), image.getHeight(null), this);

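}

This way, once the main class FaceRecognize has an image from the camera, it passes it to VideoPanel through setImageWithMat and then immediately calls FaceRecognize's own repaint method. The repaint request propagates downward from the parent and refreshes the child components level by level, so each child's paintComponent is triggered.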
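To make the repaint flow concrete, here is a minimal sketch of the same pattern without OpenCV. The class name ImagePanel and its renderToImage and solid helpers are hypothetical and exist only for illustration; renderToImage paints the panel into an off-screen image so the effect of paintComponent can be checked without opening a window.

```java
import java.awt.Color;
import java.awt.Graphics;
import java.awt.Graphics2D;
import java.awt.Image;
import java.awt.image.BufferedImage;
import javax.swing.JPanel;

// Hypothetical stand-in for VideoPanel: holds one image and draws it when Swing paints the panel.
class ImagePanel extends JPanel {

    private Image image;

    // Store the new frame and ask Swing to schedule a repaint of this panel.
    public void setImage(Image img) {
        this.image = img;
        repaint();
    }

    @Override
    protected void paintComponent(Graphics g) {
        super.paintComponent(g); // let the panel clear its background first
        if (image != null) {
            g.drawImage(image, 0, 0, this);
        }
    }

    // Paint the panel into an off-screen image instead of onto the screen.
    public BufferedImage renderToImage() {
        BufferedImage out = new BufferedImage(getWidth(), getHeight(), BufferedImage.TYPE_INT_RGB);
        Graphics2D g = out.createGraphics();
        try {
            paint(g); // paint() calls paintComponent(), then paints the border and the children
        } finally {
            g.dispose();
        }
        return out;
    }

    // Build a solid-color test frame.
    public static BufferedImage solid(int width, int height, Color color) {
        BufferedImage img = new BufferedImage(width, height, BufferedImage.TYPE_INT_RGB);
        Graphics2D g = img.createGraphics();
        g.setColor(color);
        g.fillRect(0, 0, width, height);
        g.dispose();
        return img;
    }
}
```

In a real window you never call paint yourself; setImage followed by Swing's own repaint cycle is enough.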

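The process of showing the camera image in the videoCamera area is:

FaceRecognize keeps reading Mat objects from the camera;

each Mat is set on the VideoPanel;

FaceRecognize's repaint method is called each time, forcing VideoPanel to redraw what the camera captured;

after each frame is shown, the loop sleeps briefly (80 milliseconds in the code below);

To get a smooth, continuous display, you can run the steps above in a single dedicated thread.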
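The read, show, sleep cycle can be sketched independently of OpenCV. In the sketch below, FrameLoop, grabber and display are hypothetical names: grabber stands in for the camera read and returns null when there is no more input, and display stands in for setting the frame on the panel. It is one possible shape for the loop, not the code used later in this article.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.function.Consumer;
import java.util.function.Supplier;

// Hypothetical helper: runs "read frame -> show frame -> sleep" on one dedicated thread.
class FrameLoop {

    // Starts the loop and returns a future that completes with the number of frames shown.
    public static <T> CompletableFuture<Integer> start(Supplier<T> grabber, Consumer<T> display, long sleepMillis) {
        ExecutorService worker = Executors.newSingleThreadExecutor();
        CompletableFuture<Integer> result = CompletableFuture.supplyAsync(() -> {
            int shown = 0;
            while (true) {
                T frame = grabber.get();
                if (frame == null) {
                    break; // no input: leave the loop
                }
                display.accept(frame);
                shown++;
                try {
                    Thread.sleep(sleepMillis);
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt(); // keep the interrupt flag and stop
                    break;
                }
            }
            return shown;
        }, worker);
        worker.shutdown(); // accept no further tasks; the thread ends when the loop returns
        return result;
    }
}
```

Because the loop runs on its own thread, the Swing event thread stays free to handle button clicks and painting.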

Driving the camera with OpenCV

OpenCV uses the following class to drive the camera:

private static VideoCapture capture = new VideoCapture();

We then open the camera and read from it as follows:

capture.open(0);

Scalar color = new Scalar(0, 255, 0);

MatOfRect faces = new MatOfRect();

if (capture.isOpened()) {

logger.info(">>>>>>video camera in working");

Mat faceMat = new Mat();

while (true) {

capture.read(faceMat);

if (!faceMat.empty()) {

faceCascade.detectMultiScale(faceMat, faces);

Rect[] facesArray = faces.toArray();

for (int i = 0; i < facesArray.length; i++) {

Imgproc.rectangle(faceMat, facesArray[i].tl(), facesArray[i].br(), color, 2);

}

// show every frame, with or without detected faces, so the video does not freeze

videoPanel.setImageWithMat(faceMat);

frame.repaint();

} else {

logger.info(">>>>>>not found anyinput");

break;

}

Thread.sleep(80);

}

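}

The code above follows the four steps I described.

capture.open(0) opens the first camera connected to your computer. Many Macs have a built-in camera, so on a Mac this call drives the default built-in camera;

if (capture.isOpened()) is essential. Many online tutorials skip this check, so when no picture appears people spend a long time debugging the code, only to find that the camera driver was faulty or the camera was broken, and that swapping the camera fixes it;

while (true) followed by capture.read(faceMat) keeps reading from the camera and stores each frame in a Mat object;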
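Once a frame is in a Mat, it has to become a java.awt image before Swing can draw it. OpenCV stores color frames as B, G, R bytes, which is the same byte order BufferedImage.TYPE_3BYTE_BGR uses, so a plain byte copy is enough. The sketch below works on a raw byte array so that it runs without OpenCV; in the real program the bytes would come from Mat.get(0, 0, buffer), as in the matToImage method of the full listing. BgrFrames and toImage are hypothetical names.

```java
import java.awt.image.BufferedImage;
import java.awt.image.DataBufferByte;

// Hypothetical helper: wraps raw BGR bytes in a BufferedImage that Swing can draw.
class BgrFrames {

    // bgr must hold width * height * 3 bytes, laid out row by row as B, G, R.
    public static BufferedImage toImage(byte[] bgr, int width, int height) {
        if (bgr.length != width * height * 3) {
            throw new IllegalArgumentException(
                    "expected " + (width * height * 3) + " bytes, got " + bgr.length);
        }
        // TYPE_3BYTE_BGR keeps its pixels in the same B, G, R byte order, so no channel swap is needed.
        BufferedImage image = new BufferedImage(width, height, BufferedImage.TYPE_3BYTE_BGR);
        byte[] target = ((DataBufferByte) image.getRaster().getDataBuffer()).getData();
        System.arraycopy(bgr, 0, target, 0, bgr.length);
        return image;
    }
}
```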

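As mentioned earlier, to make this smoother I run the loop in its own thread so that it does not block the Java Swing main window, and I draw a green rectangle around each face in the frame. For this I wrote the following function: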

public void invokeCamera(JFrame frame, VideoPanel videoPanel) {

new Thread() {

public void run() {

CascadeClassifier faceCascade = new CascadeClassifier();

faceCascade.load(cascadeFileFullPath);

try {

capture.open(0);

Scalar color = new Scalar(0, 255, 0);

MatOfRect faces = new MatOfRect();

// Mat faceFrames = new Mat();

if (capture.isOpened()) {

logger.info(">>>>>>video camera in working");

Mat faceMat = new Mat();

while (true) {

capture.read(faceMat);

if (!faceMat.empty()) {

faceCascade.detectMultiScale(faceMat, faces);

Rect[] facesArray = faces.toArray();

for (int i = 0; i < facesArray.length; i++) {

Imgproc.rectangle(faceMat, facesArray[i].tl(), facesArray[i].br(), color, 2);

}

// show every frame, with or without detected faces, so the video does not freeze

videoPanel.setImageWithMat(faceMat);

frame.repaint();

} else {

logger.info(">>>>>>not found anyinput");

break;

}

Thread.sleep(80);

}

}

} catch (Exception e) {

logger.error("invoke camera error: " + e.getMessage(), e);

}

}

}.start();

}

Together with our main method, it is used like this:

public static void main(String[] args) {

FaceRecognize frame = new FaceRecognize();

frame.setVisible(true);

frame.invokeCamera(frame, videoCamera);

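}

Taking a photo with the camera

We already covered the basics in part four of this OpenCV Java introduction series, "Recognizing that this is a face": it comes down to writing a Mat out to a jpg file.

In this article, to make the result a little nicer, we do the following:

scale the Mat from the camera proportionally to fit videoPreview;

write the camera's current Mat to an external file;

run the file write in a separate thread as well, so that it does not block the main window;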
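The "write to disk without blocking the window" step can be sketched as follows. This is an illustration under stated assumptions, not the article's TakePhotoProcess: PhotoSaver and saveAsync are hypothetical names, and javax.imageio.ImageIO stands in for Imgcodecs.imwrite so the sketch runs without OpenCV. The file name follows the same timestamp-plus-.jpg scheme used later in this article.

```java
import java.awt.image.BufferedImage;
import java.io.File;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.util.concurrent.CompletableFuture;
import javax.imageio.ImageIO;

// Hypothetical helper: saves a frame as <dir>/<timestamp>.jpg on a background thread.
class PhotoSaver {

    // Returns immediately; the future completes with the written file.
    public static CompletableFuture<File> saveAsync(BufferedImage frame, File dir) {
        return CompletableFuture.supplyAsync(() -> {
            File target = new File(dir, System.currentTimeMillis() + ".jpg");
            try {
                // ImageIO.write returns false when no writer supports the image type
                if (!ImageIO.write(frame, "jpg", target)) {
                    throw new IOException("no JPEG writer available for this image type");
                }
            } catch (IOException e) {
                throw new UncheckedIOException(e);
            }
            return target;
        });
    }
}
```

The default executor uses daemon threads, so a short-lived program should wait on the returned future (for example with join()) before exiting; a long-running Swing application does not need to.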

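Scaling the image proportionally

This lives in the ImageUtils class. It takes a Mat and converts it to a java.awt image;

it then uses AffineTransformOp with a ratio derived from the image's original size to scale the image proportionally toward the target size (width 165, height 200), padding the remainder with white;

the scaled image is handed back to the FaceRecognize main class, which shows it in the preview area through SetImageWithImg. The preview area is also declared as a VideoPanel;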
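As a compact illustration of proportional scaling with white padding, here is a sketch that fits an image into a box. It differs from scale2 in one deliberate way, which is my assumption rather than the article's method: it takes the smaller of the width ratio and the height ratio, so the result always fits inside the box. FitScaler and scaleToFit are hypothetical names.

```java
import java.awt.Color;
import java.awt.Graphics2D;
import java.awt.geom.AffineTransform;
import java.awt.image.AffineTransformOp;
import java.awt.image.BufferedImage;

// Hypothetical helper: scales an image to fit a box while keeping its aspect ratio.
class FitScaler {

    public static BufferedImage scaleToFit(BufferedImage src, int boxWidth, int boxHeight) {
        // The smaller ratio guarantees that both dimensions fit inside the box.
        double ratio = Math.min((double) boxWidth / src.getWidth(), (double) boxHeight / src.getHeight());
        AffineTransformOp op = new AffineTransformOp(
                AffineTransform.getScaleInstance(ratio, ratio), AffineTransformOp.TYPE_BILINEAR);
        BufferedImage scaled = op.filter(src, null);

        // Center the scaled image on a white canvas of exactly the requested size.
        BufferedImage canvas = new BufferedImage(boxWidth, boxHeight, BufferedImage.TYPE_INT_RGB);
        Graphics2D g = canvas.createGraphics();
        try {
            g.setColor(Color.WHITE);
            g.fillRect(0, 0, boxWidth, boxHeight);
            int x = (boxWidth - scaled.getWidth()) / 2;
            int y = (boxHeight - scaled.getHeight()) / 2;
            g.drawImage(scaled, x, y, null);
        } finally {
            g.dispose();
        }
        return canvas;
    }
}
```

For a 640*480 camera frame and a 165*200 box, the width ratio is the smaller one, so the frame is scaled to the full box width and padded with white above and below.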

To wire this up, we add an action listener to photoButton:

JButton photoButton = new JButton("Take Photo");

photoButton.addActionListener(new ActionListener() {

public void actionPerformed(ActionEvent e) {

logger.info(">>>>>>take photo performed");

StringBuffer photoPathStr = new StringBuffer();

photoPathStr.append(photoPath);

try {

if (capture.isOpened()) {

Mat myFace = new Mat();

while (true) {

capture.read(myFace);

if (!myFace.empty()) {

Image previewImg = ImageUtils.scale2(myFace, 200, 165, true);// scale proportionally; scale2 takes (mat, height, width, pad)

TakePhotoProcess takePhoto = new TakePhotoProcess(photoPath.toString(), myFace);

takePhoto.start();// write the photo to disk

videoPreview.SetImageWithImg(previewImg);// show the proportionally scaled photo in the preview panel

videoPreview.repaint();// make the preview panel redraw

break;

}

}

}

} catch (Exception ex) {

logger.error(">>>>>>take photo error: " + ex.getMessage(), ex);

}

}

});

TakePhotoProcess is a plain thread. Its code is as follows:

package org.mk.opencv.sample;

import org.apache.log4j.Logger;

import org.opencv.core.Mat;

import org.opencv.core.Scalar;

import org.opencv.imgcodecs.Imgcodecs;

public class TakePhotoProcess extends Thread {

private static Logger logger = Logger.getLogger(TakePhotoProcess.class);

private String imgPath;

private Mat faceMat;

private final static Scalar color = new Scalar(0, 0, 255);

public TakePhotoProcess(String imgPath, Mat faceMat) {

this.imgPath = imgPath;

this.faceMat = faceMat;

}

public void run() {

try {

long currentTime = System.currentTimeMillis();

StringBuffer samplePath = new StringBuffer();

samplePath.append(imgPath).append(currentTime).append(".jpg");

Imgcodecs.imwrite(samplePath.toString(), faceMat);

logger.info(">>>>>>write image into->" + samplePath.toString());

} catch (Exception e) {

logger.error(e.getMessage(), e);

}

}

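}

The other two buttons, trainButton and identifyButton, are left for the next two articles. We take it one step at a time so that the fundamentals are solid.

Once FaceRecognize is running, clicking photoButton writes the current frame to disk and shows its scaled copy in the preview panel.

Full code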

OpenCVUtil.java

package org.mk.opencv.util;

import java.awt.Image;

import java.awt.image.BufferedImage;

import java.awt.image.DataBufferByte;

import java.util.ArrayList;

import java.util.LinkedList;

import java.util.List;

import java.io.File;

import org.apache.log4j.Logger;

import org.opencv.core.CvType;

import org.opencv.core.Mat;

public class OpenCVUtil {

private static Logger logger = Logger.getLogger(OpenCVUtil.class);

public static Image matToImage(Mat matrix) {

int type = BufferedImage.TYPE_BYTE_GRAY;

if (matrix.channels() > 1) {

type = BufferedImage.TYPE_3BYTE_BGR;

}

int bufferSize = matrix.channels() * matrix.cols() * matrix.rows();

byte[] buffer = new byte[bufferSize];

matrix.get(0, 0, buffer); // copy all pixels into the buffer

BufferedImage image = new BufferedImage(matrix.cols(), matrix.rows(), type);

final byte[] targetPixels = ((DataBufferByte) image.getRaster().getDataBuffer()).getData();

System.arraycopy(buffer, 0, targetPixels, 0, buffer.length);

return image;

}

public static List<String> getFilesFromFolder(String folderPath) {

List<String> fileList = new ArrayList<String>();

File f = new File(folderPath);

if (f.isDirectory()) {

File[] files = f.listFiles();

for (File singleFile : files) {

fileList.add(singleFile.getPath());

}

}

return fileList;

}

public static String randomFileName() {

StringBuffer fn = new StringBuffer();

fn.append(System.currentTimeMillis()).append((int) (System.currentTimeMillis() % (10000 - 1) + 1))

.append(".jpg");

return fn.toString();

}

public static List<FileBean> getPicFromFolder(String rootPath) {

List<FileBean> fList = new ArrayList<FileBean>();

int fileNum = 0, folderNum = 0;

File file = new File(rootPath);

if (file.exists()) {

LinkedList<File> list = new LinkedList<File>();

File[] files = file.listFiles();

for (File file2 : files) {

if (file2.isDirectory()) {

// logger.info(">>>>>>folder: " + file2.getAbsolutePath());

list.add(file2);

folderNum++;

} else {

// logger.info(">>>>>>file: " + file2.getAbsolutePath());

FileBean f = new FileBean();

String fileName = file2.getName();

String suffix = fileName.substring(fileName.lastIndexOf(".") + 1);

File fParent = new File(file2.getParent());

String parentFolderName = fParent.getName();

f.setFileFullPath(file2.getAbsolutePath());

f.setFileType(suffix);

f.setFolderName(parentFolderName);

fList.add(f);

fileNum++;

}

}

File temp_file;

while (!list.isEmpty()) {

temp_file = list.removeFirst();

files = temp_file.listFiles();

for (File file2 : files) {

if (file2.isDirectory()) {

// System.out.println("folder: " + file2.getAbsolutePath());

list.add(file2);

folderNum++;

} else {

// logger.info(">>>>>>file: " + file2.getAbsolutePath());

FileBean f = new FileBean();

String fileName = file2.getName();

String suffix = fileName.substring(fileName.lastIndexOf(".") + 1);

File fParent = new File(file2.getParent());

String parentFolderName = fParent.getName();

f.setFileFullPath(file2.getAbsolutePath());

f.setFileType(suffix);

f.setFolderName(parentFolderName);

fList.add(f);

fileNum++;

}

}

}

} else {

logger.info(">>>>>>file does not exist!");

}

// logger.info(">>>>>>folders: " + folderNum + ", files: " + fileNum);

return fList;

}

public static BufferedImage matToBufferedImage(Mat matrix) {

int cols = matrix.cols();

int rows = matrix.rows();

int elemSize = (int) matrix.elemSize();

byte[] data = new byte[cols * rows * elemSize];

int type;

matrix.get(0, 0, data);

switch (matrix.channels()) {

case 1:

type = BufferedImage.TYPE_BYTE_GRAY;

break;

case 3:

type = BufferedImage.TYPE_3BYTE_BGR;

// bgr to rgb

byte b;

for (int i = 0; i < data.length; i = i + 3) {

b = data[i];

data[i] = data[i + 2];

data[i + 2] = b;

}

break;

default:

return null;

}

BufferedImage image2 = new BufferedImage(cols, rows, type);

image2.getRaster().setDataElements(0, 0, cols, rows, data);

return image2;

}

public static Mat bufferedImageToMat(BufferedImage bi) {

Mat mat = new Mat(bi.getHeight(), bi.getWidth(), CvType.CV_8UC3);

byte[] data = ((DataBufferByte) bi.getRaster().getDataBuffer()).getData();

mat.put(0, 0, data);

return mat;

}

}

ImageUtils.java

package org.mk.opencv.util;

import java.awt.Color;

import java.awt.Graphics;

import java.awt.Graphics2D;

import java.awt.GraphicsConfiguration;

import java.awt.GraphicsDevice;

import java.awt.GraphicsEnvironment;

import java.awt.HeadlessException;

import java.awt.Image;

import java.awt.Transparency;

import java.awt.geom.AffineTransform;

import java.awt.image.AffineTransformOp;

import java.awt.image.BufferedImage;

import java.io.File;

import java.io.IOException;

import javax.imageio.ImageIO;

import javax.swing.ImageIcon;

import org.opencv.core.Mat;

public class ImageUtils {

public static String IMAGE_TYPE_GIF = "gif";// Graphics Interchange Format

public static String IMAGE_TYPE_JPG = "jpg";// Joint Photographic Experts Group

public static String IMAGE_TYPE_JPEG = "jpeg";// Joint Photographic Experts Group

public static String IMAGE_TYPE_BMP = "bmp";// short for Bitmap, the standard image file format on Windows

public static String IMAGE_TYPE_PNG = "png";// Portable Network Graphics

public static String IMAGE_TYPE_PSD = "psd";// Photoshop's native format

public final synchronized static Image scale2(Mat mat, int height, int width, boolean bb) throws Exception {

// boolean flg = false;

Image itemp = null;

try {

double ratio = 0.0; // scaling ratio

// File f = new File(srcImageFile);

// BufferedImage bi = ImageIO.read(f);

BufferedImage bi = OpenCVUtil.matToBufferedImage(mat);

itemp = bi.getScaledInstance(width, height, bi.SCALE_SMOOTH);

// compute the ratio

// if ((bi.getHeight() > height) || (bi.getWidth() > width)) {

// flg = true;

if (bi.getHeight() > bi.getWidth()) {

ratio = Integer.valueOf(height).doubleValue() / bi.getHeight();

} else {

ratio = Integer.valueOf(width).doubleValue() / bi.getWidth();

}

AffineTransformOp op = new AffineTransformOp(AffineTransform.getScaleInstance(ratio, ratio), null);

itemp = op.filter(bi, null);

// }

if (bb) {// pad the remaining area with white

BufferedImage image = new BufferedImage(width, height, BufferedImage.TYPE_INT_RGB);

Graphics2D g = image.createGraphics();

g.setColor(Color.white);

g.fillRect(0, 0, width, height);

if (width == itemp.getWidth(null))

g.drawImage(itemp, 0, (height - itemp.getHeight(null)) / 2, itemp.getWidth(null),

itemp.getHeight(null), Color.white, null);

else

g.drawImage(itemp, (width - itemp.getWidth(null)) / 2, 0, itemp.getWidth(null),

itemp.getHeight(null), Color.white, null);

g.dispose();

itemp = image;

}

// if (flg)

// ImageIO.write((BufferedImage) itemp, "JPEG", new File(result));

} catch (Exception e) {

throw new Exception("scale2 error: " + e.getMessage(), e);

}

return itemp;

}

public static BufferedImage toBufferedImage(Image image) {

if (image instanceof BufferedImage) {

return (BufferedImage) image;

}

// make sure all pixels of the image are loaded

image = new ImageIcon(image).getImage();

// use this if the image has transparency

// boolean hasAlpha = hasAlpha(image);

// create a BufferedImage in a format compatible with the screen

BufferedImage bimage = null;

GraphicsEnvironment ge = GraphicsEnvironment.getLocalGraphicsEnvironment();

try {

// decide the transparency of the new buffered image

int transparency = Transparency.OPAQUE;

// if (hasAlpha) {

transparency = Transparency.BITMASK;

// }

// create the BufferedImage

GraphicsDevice gs = ge.getDefaultScreenDevice();

GraphicsConfiguration gc = gs.getDefaultConfiguration();

bimage = gc.createCompatibleImage(image.getWidth(null), image.getHeight(null), transparency);

} catch (HeadlessException e) {

// the system has no screen (headless)

}

if (bimage == null) {

// create a BufferedImage with a default color model

int type = BufferedImage.TYPE_INT_RGB;

// int type = BufferedImage.TYPE_3BYTE_BGR;//by wang

// if (hasAlpha) {

type = BufferedImage.TYPE_INT_ARGB;

// }

bimage = new BufferedImage(image.getWidth(null), image.getHeight(null), type);

}

// copy the image into the BufferedImage

Graphics g = bimage.createGraphics();

// draw the image onto the BufferedImage

g.drawImage(image, 0, 0, null);

g.dispose();

return bimage;

}

}

FileBean.java

package org.mk.opencv.util;

import java.io.Serializable;

public class FileBean implements Serializable {

private String fileFullPath;

private String folderName;

private String fileType;

public String getFileType() {

return fileType;

}

public void setFileType(String fileType) {

this.fileType = fileType;

}

public String getFileFullPath() {

return fileFullPath;

}

public void setFileFullPath(String fileFullPath) {

this.fileFullPath = fileFullPath;

}

public String getFolderName() {

return folderName;

}

public void setFolderName(String folderName) {

this.folderName = folderName;

}

}

VideoPanel.java

package org.mk.opencv;

import java.awt.*;

import java.awt.image.BufferedImage;

import javax.swing.*;

import org.mk.opencv.util.ImageUtils;

import org.mk.opencv.util.OpenCVUtil;

import org.opencv.core.Mat;

public class VideoPanel extends JPanel {

private Image image;

public void setImageWithMat(Mat mat) {

image = OpenCVUtil.matToBufferedImage(mat);

this.repaint();

}

public void SetImageWithImg(Image img) {

image = img;

}

public Mat getMatFromImage() {

Mat faceMat = new Mat();

BufferedImage bi = ImageUtils.toBufferedImage(image);

faceMat = OpenCVUtil.bufferedImageToMat(bi);

return faceMat;

}

@Override

protected void paintComponent(Graphics g) {

super.paintComponent(g);

if (image != null)

g.drawImage(image, 0, 0, image.getWidth(null), image.getHeight(null), this);

}

public static VideoPanel show(String title, int width, int height, int open) {

JFrame frame = new JFrame(title);

if (open == 0) {

frame.setDefaultCloseOperation(JFrame.EXIT_ON_CLOSE);

} else {

frame.setDefaultCloseOperation(JFrame.DISPOSE_ON_CLOSE);

}

frame.setSize(width, height);

frame.setBounds(0, 0, width, height);

VideoPanel videoPanel = new VideoPanel();

videoPanel.setSize(width, height);

frame.setContentPane(videoPanel);

frame.setVisible(true);

return videoPanel;

}

}

TakePhotoProcess.java

package org.mk.opencv.sample;

import org.apache.log4j.Logger;

import org.opencv.core.Mat;

import org.opencv.core.Scalar;

import org.opencv.imgcodecs.Imgcodecs;

public class TakePhotoProcess extends Thread {

private static Logger logger = Logger.getLogger(TakePhotoProcess.class);

private String imgPath;

private Mat faceMat;

private final static Scalar color = new Scalar(0, 0, 255);

public TakePhotoProcess(String imgPath, Mat faceMat) {

this.imgPath = imgPath;

this.faceMat = faceMat;

}

public void run() {

try {

long currentTime = System.currentTimeMillis();

StringBuffer samplePath = new StringBuffer();

samplePath.append(imgPath).append(currentTime).append(".jpg");

Imgcodecs.imwrite(samplePath.toString(), faceMat);

logger.info(">>>>>>write image into->" + samplePath.toString());

} catch (Exception e) {

logger.error(e.getMessage(), e);

}

}

}

FaceRecognize.java (the core main class)

package org.mk.opencv.sample;

import java.awt.EventQueue;

import javax.swing.JFrame;

import javax.swing.JPanel;

import javax.swing.border.EmptyBorder;

import org.apache.log4j.Logger;

import org.mk.opencv.VideoPanel;

// TakePhotoProcess is declared in this same package (org.mk.opencv.sample), so no import is needed

import org.mk.opencv.util.ImageUtils;

import org.mk.opencv.util.OpenCVUtil;

import org.opencv.core.Core;

import org.opencv.core.Mat;

import org.opencv.core.MatOfRect;

import org.opencv.core.Rect;

import org.opencv.core.Scalar;

import org.opencv.core.Size;

import org.opencv.imgproc.Imgproc;

import org.opencv.objdetect.CascadeClassifier;

import org.opencv.videoio.VideoCapture;

import javax.swing.border.BevelBorder;

import javax.swing.JLabel;

import javax.swing.SwingConstants;

import java.awt.GridLayout;

import java.awt.Image;

import java.awt.event.ActionEvent;

import java.awt.event.ActionListener;

import javax.swing.JButton;

public class FaceRecognize extends JFrame {

static {

System.loadLibrary(Core.NATIVE_LIBRARY_NAME);

}

private static Logger logger = Logger.getLogger(FaceRecognize.class);

private static final String cascadeFileFullPath = "D:\\opencvinstall\\build\\install\\etc\\lbpcascades\\lbpcascade_frontalface.xml";

private static final String photoPath = "D:\\opencv-demo\\face\\";

private JPanel contentPane;

protected static VideoPanel videoCamera = new VideoPanel();

private static final Size faceSize = new Size(165, 200);

private static VideoCapture capture = new VideoCapture();

public static void main(String[] args) {

FaceRecognize frame = new FaceRecognize();

frame.setVisible(true);

frame.invokeCamera(frame, videoCamera);

}

public void invokeCamera(JFrame frame, VideoPanel videoPanel) {

new Thread() {

public void run() {

CascadeClassifier faceCascade = new CascadeClassifier();

faceCascade.load(cascadeFileFullPath);

try {

capture.open(0);

Scalar color = new Scalar(0, 255, 0);

MatOfRect faces = new MatOfRect();

// Mat faceFrames = new Mat();

if (capture.isOpened()) {

logger.info(">>>>>>video camera in working");

Mat faceMat = new Mat();

while (true) {

capture.read(faceMat);

if (!faceMat.empty()) {

faceCascade.detectMultiScale(faceMat, faces);

Rect[] facesArray = faces.toArray();

for (int i = 0; i < facesArray.length; i++) {

Imgproc.rectangle(faceMat, facesArray[i].tl(), facesArray[i].br(), color, 2);

}

// show every frame, with or without detected faces, so the video does not freeze

videoPanel.setImageWithMat(faceMat);

frame.repaint();

} else {

logger.info(">>>>>>not found anyinput");

break;

}

Thread.sleep(80);

}

}

} catch (Exception e) {

logger.error("invoke camera error: " + e.getMessage(), e);

}

}

}.start();

}

public FaceRecognize() {

setDefaultCloseOperation(JFrame.EXIT_ON_CLOSE);

setBounds(100, 100, 1024, 768);

contentPane = new JPanel();

contentPane.setBorder(new EmptyBorder(5, 5, 5, 5));

setContentPane(contentPane);

contentPane.setLayout(null);

JPanel cameraGroup = new JPanel();

cameraGroup.setBounds(10, 10, 988, 580);

contentPane.add(cameraGroup);

cameraGroup.setLayout(null);

JLabel videoDescriptionLabel = new JLabel("Video");

videoDescriptionLabel.setHorizontalAlignment(SwingConstants.CENTER);

videoDescriptionLabel.setBounds(0, 10, 804, 23);

cameraGroup.add(videoDescriptionLabel);

videoCamera.setBorder(new BevelBorder(BevelBorder.LOWERED, null, null, null, null));

videoCamera.setBounds(10, 43, 794, 527);

cameraGroup.add(videoCamera);

// JPanel videoPreview = new JPanel();

VideoPanel videoPreview = new VideoPanel();

videoPreview.setBorder(new BevelBorder(BevelBorder.LOWERED, null, null, null, null));

videoPreview.setBounds(807, 359, 171, 211);

cameraGroup.add(videoPreview);

JLabel lblNewLabel = new JLabel("Preview");

lblNewLabel.setHorizontalAlignment(SwingConstants.CENTER);

lblNewLabel.setBounds(807, 307, 171, 42);

cameraGroup.add(lblNewLabel);

JPanel buttonGroup = new JPanel();

buttonGroup.setBounds(65, 610, 710, 35);

contentPane.add(buttonGroup);

buttonGroup.setLayout(new GridLayout(1, 0, 0, 0));

JButton photoButton = new JButton("Take Photo");

photoButton.addActionListener(new ActionListener() {

public void actionPerformed(ActionEvent e) {

logger.info(">>>>>>take photo performed");

StringBuffer photoPathStr = new StringBuffer();

photoPathStr.append(photoPath);

try {

if (capture.isOpened()) {

Mat myFace = new Mat();

while (true) {

capture.read(myFace);

if (!myFace.empty()) {

Image previewImg = ImageUtils.scale2(myFace, 200, 165, true);// scale proportionally; scale2 takes (mat, height, width, pad)

TakePhotoProcess takePhoto = new TakePhotoProcess(photoPath.toString(), myFace);

takePhoto.start();// write the photo to disk

videoPreview.SetImageWithImg(previewImg);// show the proportionally scaled photo in the preview panel

videoPreview.repaint();// make the preview panel redraw

break;

}

}

}

} catch (Exception ex) {

logger.error(">>>>>>take photo error: " + ex.getMessage(), ex);

}

}

});

buttonGroup.add(photoButton);

JButton trainButton = new JButton("Train");

buttonGroup.add(trainButton);

JButton identifyButton = new JButton("Identify");

buttonGroup.add(identifyButton);

}

}