这篇文章主要讲解了“C++中内存池的原理及实现方法是什么”,文中的讲解内容简单清晰,易于学习与理解,下面请大家跟着小编的思路慢慢深入,一起来研究和学习“C++中内存池的原理及实现方法是什么”吧!

为什么要用内存池

C++程序默认的内存管理(new,delete,malloc,free)会频繁地在堆上分配和释放内存,导致性能的损失,产生大量的内存碎片,降低内存的利用率。默认的内存管理因为被设计的比较通用,所以在性能上并不能做到极致。

因此,很多时候需要根据业务需求设计专用内存管理器,便于针对特定数据结构和使用场合的内存管理,比如:内存池。

内存池原理

内存池的思想是,在真正使用内存之前,预先申请分配一定数量、大小预设的内存块留作备用。当有新的内存需求时,就从内存池中分出一部分内存块,若内存块不够再继续申请新的内存,当内存释放后就回归到内存块留作后续的复用,使得内存使用效率得到提升,一般也不会产生不可控制的内存碎片。

内存池设计

算法原理:

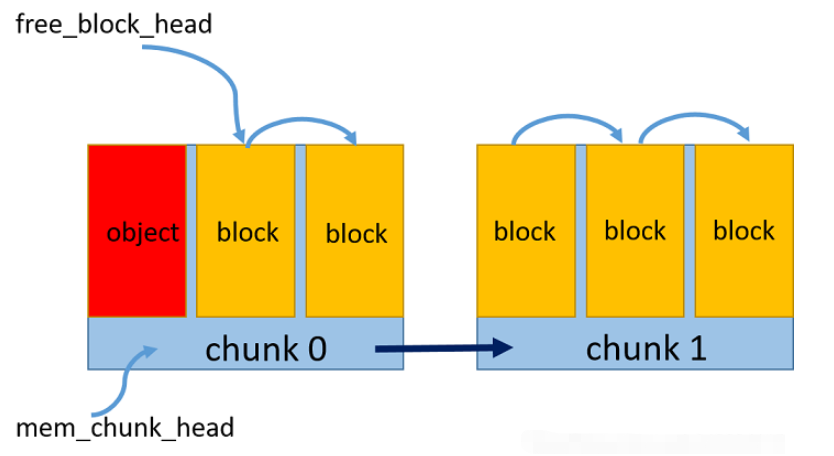

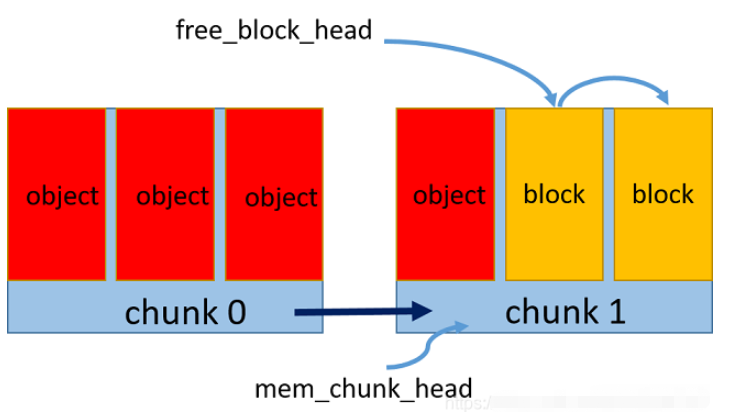

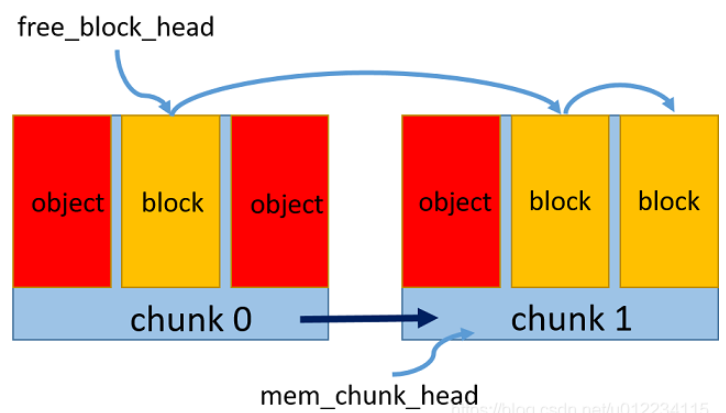

预申请一个内存区chunk,将内存中按照对象大小划分成多个内存块block

维持一个空闲内存块链表,通过指针相连,标记头指针为第一个空闲块

每次新申请一个对象的空间,则将该内存块从空闲链表中去除,更新空闲链表头指针

每次释放一个对象的空间,则重新将该内存块加到空闲链表头

如果一个内存区占满了,则新开辟一个内存区,维持一个内存区的链表,同指针相连,头指针指向最新的内存区,新的内存块从该区内重新划分和申请

如图所示:

内存池实现

memory_pool.hpp

#ifndef _MEMORY_POOL_H_#define _MEMORY_POOL_H_#include <stdint.h>#include <mutex>template<size_t BlockSize, size_t BlockNum = 10>class MemoryPool{public:MemoryPool(){std::lock_guard<std::mutex> lk(mtx); // avoid race condition// init empty memory pointerfree_block_head = NULL;mem_chunk_head = NULL;}~MemoryPool(){std::lock_guard<std::mutex> lk(mtx); // avoid race condition// destruct automaticallyMemChunk* p;while (mem_chunk_head){p = mem_chunk_head->next;delete mem_chunk_head;mem_chunk_head = p;}}void* allocate(){std::lock_guard<std::mutex> lk(mtx); // avoid race condition// allocate one object memory// if no free block in current chunk, should create new chunkif (!free_block_head){// malloc mem chunkMemChunk* new_chunk = new MemChunk;new_chunk->next = NULL;// set this chunk's first block as free block headfree_block_head = &(new_chunk->blocks[0]);// link the new chunk's all blocksfor (int i = 1; i < BlockNum; i++)new_chunk->blocks[i - 1].next = &(new_chunk->blocks[i]);new_chunk->blocks[BlockNum - 1].next = NULL; // final block next is NULLif (!mem_chunk_head)mem_chunk_head = new_chunk;else{// add new chunk to chunk listmem_chunk_head->next = new_chunk;mem_chunk_head = new_chunk;}}// allocate the current free block to the objectvoid* object_block = free_block_head;free_block_head = free_block_head->next; return object_block;}void* allocate(size_t size){std::lock_guard<std::mutex> lk(array_mtx); // avoid race condition for continuous memory// calculate objects numint n = size / BlockSize;// allocate n objects in continuous memory// FIXME: make sure n > 0void* p = allocate();for (int i = 1; i < n; i++)allocate();return p;}void deallocate(void* p){std::lock_guard<std::mutex> lk(mtx); // avoid race condition// free object memoryFreeBlock* block = static_cast<FreeBlock*>(p);block->next = free_block_head; // insert the free block to headfree_block_head = block;}private:// free node block, every block size exactly can contain one objectstruct FreeBlock{unsigned char data[BlockSize];FreeBlock* next;};FreeBlock* free_block_head;// memory chunk, every chunk contains blocks number with fixed BlockNumstruct MemChunk{FreeBlock blocks[BlockNum];MemChunk* next;};MemChunk* mem_chunk_head;// thread safe relatedstd::mutex mtx;std::mutex array_mtx;};#endif // !_MEMORY_POOL_H_main.cpp

#include <iostream>#include "memory_pool.hpp"class MyObject{public:MyObject(int x): data(x){//std::cout << "contruct object" << std::endl;}~MyObject(){//std::cout << "destruct object" << std::endl;}int data;// override new and delete to use memory poolvoid* operator new(size_t size);void operator delete(void* p);void* operator new[](size_t size);void operator delete[](void* p);};// define memory pool with block size as class sizeMemoryPool<sizeof(MyObject), 3> gMemPool;void* MyObject::operator new(size_t size){//std::cout << "new object space" << std::endl;return gMemPool.allocate();}void MyObject::operator delete(void* p){//std::cout << "free object space" << std::endl;gMemPool.deallocate(p);}void* MyObject::operator new[](size_t size){// TODO: not supported continuous memoery pool for now//return gMemPool.allocate(size);return NULL;}void MyObject::operator delete[](void* p){// TODO: not supported continuous memoery pool for now//gMemPool.deallocate(p);}int main(int argc, char* argv[]){MyObject* p1 = new MyObject(1);std::cout << "p1 " << p1 << " " << p1->data<< std::endl;MyObject* p2 = new MyObject(2);std::cout << "p2 " << p2 << " " << p2->data << std::endl;delete p2;MyObject* p3 = new MyObject(3);std::cout << "p3 " << p3 << " " << p3->data << std::endl;MyObject* p4 = new MyObject(4);std::cout << "p4 " << p4 << " " << p4->data << std::endl;MyObject* p5 = new MyObject(5);std::cout << "p5 " << p5 << " " << p5->data << std::endl;MyObject* p6 = new MyObject(6);std::cout << "p6 " << p6 << " " << p6->data << std::endl;delete p1;delete p2;//delete p3;delete p4;delete p5;delete p6;getchar();return 0;}运行结果

p1 00000174BEDE0440 1

p2 00000174BEDE0450 2

p3 00000174BEDE0450 3

p4 00000174BEDE0460 4

p5 00000174BEDD5310 5

p6 00000174BEDD5320 6

可以看到内存地址是连续,并且回收一个节点后,依然有序地开辟内存

对象先开辟内存再构造,先析构再释放内存

注意

在内存分配和释放的环节需要加锁来保证线程安全

还没有实现对象数组的分配和释放

感谢各位的阅读,以上就是“C++中内存池的原理及实现方法是什么”的内容了,经过本文的学习后,相信大家对C++中内存池的原理及实现方法是什么这一问题有了更深刻的体会,具体使用情况还需要大家实践验证。这里是编程网,小编将为大家推送更多相关知识点的文章,欢迎关注!